How to Audit and Optimize Your Bot Management Strategy for Agentic AI Crawlers in 2026

With over 500 million weekly ChatGPT users and AI search now accounting for 35% of all queries in early 2026, the way search engines discover and index content has fundamentally changed. Yet here's a startling reality: 47% of websites still operate with robots.txt policies designed for traditional crawlers, while the crawlers and agents behind AI systems such as ChatGPT, Claude, and Gemini require entirely different optimization approaches.

The surge in LLMs.txt adoption—up 340% since late 2024—signals a critical shift in how content creators communicate with AI systems. But are you ready for this new paradigm?

The New Reality of AI Crawling in 2026

Traditional SEO assumed search engines would crawl, index, and rank your content for human searchers. Today's agentic AI crawlers operate differently:

This shift means your 2023-era bot management strategy might be actively hindering your AI visibility.

Understanding LLMs.txt vs. Traditional Robots.txt

What LLMs.txt Offers

LLMs.txt is a proposed standard (a Markdown file served at /llms.txt) that lets websites give AI systems and crawlers a curated guide to their most useful content. Unlike robots.txt, which simply blocks or allows access, LLMs.txt provides:

The Problem with Outdated Robots.txt Policies

Many websites still use robots.txt files from 2022-2023 that:

Conducting Your Bot Management Audit

Step 1: Analyze Your Current Robot Policies

Start by examining your robots.txt file:

```
# Example of outdated policy
User-agent: *
Crawl-delay: 10
Disallow: /admin/
Disallow: /api/
Disallow: /search/
```
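Reading the file by eye gets error-prone once several groups interact. A minimal sketch using Python's standard-library `urllib.robotparser` reports, for each AI user agent, the crawl delay it inherits and which sample URLs it may fetch. The `audit` helper and the sample URLs are hypothetical placeholders. Note that this parser applies rules in file order rather than RFC 9309's longest-match rule, so treat its answers as approximate when `Allow` and `Disallow` paths overlap.

```python
from urllib.robotparser import RobotFileParser

# The outdated policy from the example, inlined so the sketch runs offline.
ROBOTS_TXT = """\
User-agent: *
Crawl-delay: 10
Disallow: /admin/
Disallow: /api/
Disallow: /search/
"""

# AI user agents covered in Step 2 of this guide.
AI_AGENTS = ["GPTBot", "ChatGPT-User", "ClaudeBot", "Bingbot", "PetalBot"]

# Hypothetical sample URLs; replace them with pages that matter on your site.
SAMPLE_URLS = [
    "https://example.com/blog/ai-crawlers",
    "https://example.com/search/?q=robots",
    "https://example.com/admin/login",
]


def audit(robots_txt: str, agents: list[str], urls: list[str]) -> dict[str, dict]:
    """Return, per agent, its effective crawl delay and the URLs it may fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    report = {}
    for agent in agents:
        report[agent] = {
            "crawl_delay": parser.crawl_delay(agent),
            "allowed": [url for url in urls if parser.can_fetch(agent, url)],
        }
    return report


if __name__ == "__main__":
    for agent, result in audit(ROBOTS_TXT, AI_AGENTS, SAMPLE_URLS).items():
        print(f"{agent:13} delay={result['crawl_delay']} allowed={result['allowed']}")
```

With this policy, every AI agent falls back to the `User-agent: *` group, inherits the 10-second delay, and can reach only the blog URL: exactly the kind of blanket rule this step should surface.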

Look for:

Step 2: Identify AI Crawler Traffic

In your server access logs (or a log-based analytics tool), look for these common AI crawler user agents:

- GPTBot (OpenAI)
- ChatGPT-User (ChatGPT browsing)
- ClaudeBot (Anthropic)
- Bingbot (Microsoft Bing and Copilot)
- PetalBot (Huawei AI)

Analyze their crawling patterns:
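A minimal sketch of that analysis, assuming Apache/Nginx "combined" format access logs: it classifies each request by user-agent substring, then counts requests per crawler and each crawler's busiest single minute. The token table, helper names, and log lines are illustrative. User-agent strings are self-reported and easy to spoof, so treat these counts as claims until checked against each operator's documented verification method (published IP ranges or reverse DNS).

```python
import re
from collections import Counter
from datetime import datetime
from typing import Optional

# Lowercase user-agent substrings mapped to readable labels (illustrative).
AI_CRAWLERS = {
    "gptbot": "GPTBot (OpenAI)",
    "chatgpt-user": "ChatGPT-User",
    "claudebot": "ClaudeBot (Anthropic)",
    "bingbot": "Bingbot (Microsoft)",
    "petalbot": "PetalBot (Huawei)",
}

# Combined log format: ip ident user [time] "request" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<ua>[^"]*)"'
)


def classify(user_agent: str) -> Optional[str]:
    """Return a crawler label if the User-Agent contains a known token."""
    ua = user_agent.lower()
    for token, label in AI_CRAWLERS.items():
        if token in ua:
            return label
    return None


def crawler_patterns(lines):
    """Return (requests per crawler, busiest single minute per crawler)."""
    totals = Counter()
    per_minute = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines in other formats
        label = classify(match["ua"])
        if label is None:
            continue  # not one of the tracked crawlers
        ts = datetime.strptime(match["time"], "%d/%b/%Y:%H:%M:%S %z")
        totals[label] += 1
        per_minute[(label, ts.replace(second=0))] += 1  # bucket by minute
    peak = {}
    for (label, _minute), count in per_minute.items():
        peak[label] = max(peak.get(label, 0), count)
    return totals, peak


# Made-up log lines; real crawler user-agent strings are longer.
SAMPLE = [
    '203.0.113.5 - - [10/Jan/2026:13:55:01 +0000] "GET /blog/a HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '203.0.113.5 - - [10/Jan/2026:13:55:09 +0000] "GET /blog/b HTTP/1.1" 200 4096 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '198.51.100.7 - - [10/Jan/2026:13:58:40 +0000] "GET /resources/ HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.10 - - [10/Jan/2026:13:59:12 +0000] "GET /blog/a HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

if __name__ == "__main__":
    totals, peak = crawler_patterns(SAMPLE)
    print(dict(totals), peak)
```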

Step 3: Evaluate Content Accessibility

Agentic AI crawlers need access to:

Use tools like Citescope Ai's GEO Score analyzer to evaluate how well your content serves AI crawlers across these dimensions.
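Independently of any tool, a basic accessibility check is whether your key content appears in the HTML the server returns. Many AI crawlers are reported not to execute JavaScript, so text injected only by client-side scripts may never reach them. A minimal sketch using only Python's `html.parser`; the `VisibleText` class, the `missing_phrases` helper, the two sample pages, and the phrase list are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text that sits outside <script>, <style> and <noscript> elements."""

    SKIP = {"script", "style", "noscript"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_phrases(raw_html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that do NOT appear in the server-rendered text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()  # flush any buffered text
    text = " ".join(parser.chunks).lower()
    return [p for p in phrases if p.lower() not in text]


# Server-rendered page: the article text is in the HTML itself.
SSR_PAGE = "<html><body><article><h1>Bot audit checklist</h1><p>Review robots.txt first.</p></article></body></html>"
# Client-rendered page: the text only exists after a script runs.
CSR_PAGE = '<html><body><div id="app"></div><script>renderArticle("Bot audit checklist");</script></body></html>'

if __name__ == "__main__":
    print(missing_phrases(SSR_PAGE, ["Bot audit checklist"]))
    print(missing_phrases(CSR_PAGE, ["Bot audit checklist"]))
```

In practice you would feed it the raw response body from an HTTP client that does not run JavaScript, and list a few phrases from each page's main content.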

Optimizing for Agentic AI Crawlers

Implementing Smart Bot Management

1. Create Tiered Access Policies

Differentiate between crawler types:

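```
# AI Training Crawlers
# Unlisted paths remain crawlable by default; add "Disallow: /" if GPTBot should see only these folders.
User-agent: GPTBot
Crawl-delay: 1
Allow: /blog/
Allow: /resources/
Disallow: /private/

# Search AI Crawlers
User-agent: ChatGPT-User
Crawl-delay: 1
Allow: /

# Traditional Search
# Googlebot ignores Crawl-delay, so it is omitted here.
User-agent: Googlebot
Allow: /
```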
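Keeping tiers as data makes it harder for groups to drift apart as you add crawlers. A minimal sketch, with a hypothetical `Tier` type and `render_robots` helper, that emits one robots.txt group per tier. It leaves `Crawl-delay` off the Googlebot tier because Google does not support that directive.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Tier:
    """One access tier: a label (emitted as a comment), its user agents and rules."""
    label: str
    agents: list[str]
    allow: list[str] = field(default_factory=list)
    disallow: list[str] = field(default_factory=list)
    crawl_delay: Optional[int] = None  # non-standard directive; some crawlers ignore it


def render_robots(tiers: list[Tier]) -> str:
    """Render tiers as robots.txt groups separated by blank lines."""
    groups = []
    for tier in tiers:
        lines = [f"# {tier.label}"]
        lines += [f"User-agent: {agent}" for agent in tier.agents]
        if tier.crawl_delay is not None:
            lines.append(f"Crawl-delay: {tier.crawl_delay}")
        lines += [f"Allow: {path}" for path in tier.allow]
        lines += [f"Disallow: {path}" for path in tier.disallow]
        groups.append("\n".join(lines))
    return "\n\n".join(groups) + "\n"


# The three tiers from the example, with no crawl delay for Googlebot.
TIERS = [
    Tier("AI Training Crawlers", ["GPTBot"], allow=["/blog/", "/resources/"],
         disallow=["/private/"], crawl_delay=1),
    Tier("Search AI Crawlers", ["ChatGPT-User"], allow=["/"], crawl_delay=1),
    Tier("Traditional Search", ["Googlebot"], allow=["/"]),
]

if __name__ == "__main__":
    print(render_robots(TIERS), end="")
```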

2. Implement LLMs.txt

Create an LLMs.txt file that specifies:
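As a sketch of what such a file can contain, this follows the structure described in the public llms.txt proposal: an H1 with the site name, a blockquote summary, then H2 sections of Markdown links with optional notes, where a section titled "Optional" marks links that can be skipped when context is limited. The `Link` type, `render_llms_txt` helper, site name, and URLs are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Link:
    title: str
    url: str
    note: str = ""


def render_llms_txt(site_name: str, summary: str, sections: dict[str, list[Link]]) -> str:
    """Build llms.txt text: H1 name, blockquote summary, then H2 link sections."""
    out = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        out += [f"## {heading}", ""]
        for link in links:
            suffix = f": {link.note}" if link.note else ""
            out.append(f"- [{link.title}]({link.url}){suffix}")
        out.append("")  # blank line after each section (also gives a trailing newline)
    return "\n".join(out)


SECTIONS = {
    "Docs": [Link("Bot audit guide", "https://example.com/blog/bot-audit.md",
                  "Step-by-step robots.txt review")],
    "Optional": [Link("Changelog", "https://example.com/changelog.md")],
}

if __name__ == "__main__":
    print(render_llms_txt("Example Co", "Guides on bot management for AI crawlers.", SECTIONS), end="")
```

The output would be saved as `llms.txt` at the site root, next to robots.txt.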

3. Optimize Server Response Times

AI crawlers often make multiple rapid requests. Ensure:
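One piece of this is keeping crawler bursts from degrading response times for everyone else. A minimal sketch of a per-crawler sliding-window limiter; the class name and limits are hypothetical, and the key would be a crawler label rather than the raw user-agent string. A rejected request would typically get HTTP 429 Too Many Requests with a `Retry-After` header built from the returned wait time (rounded up), though how strictly each crawler honors it varies.

```python
import time
from collections import defaultdict, deque
from typing import Optional


class SlidingWindowLimiter:
    """Allow at most `limit` requests per `window` seconds for each key."""

    def __init__(self, limit: int, window: float):
        self.limit = limit
        self.window = window
        self._hits: dict[str, deque] = defaultdict(deque)

    def check(self, key: str, now: Optional[float] = None) -> tuple[bool, float]:
        """Return (allowed, seconds_to_wait); a hit is recorded only if allowed."""
        now = time.monotonic() if now is None else now
        hits = self._hits[key]
        # Forget requests that have fallen out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) < self.limit:
            hits.append(now)
            return True, 0.0
        # Wait until the oldest request in the window expires.
        return False, self.window - (now - hits[0])


if __name__ == "__main__":
    limiter = SlidingWindowLimiter(limit=2, window=10.0)
    for t in (0.0, 1.0, 2.0, 10.0):
        print(t, limiter.check("GPTBot", now=t))
```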

Content Structure Optimization

Make Content AI-Crawler Friendly:
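As one concrete structural check, here is a sketch that flags pages lacking exactly one `<h1>` or skipping heading levels (for example, jumping from `<h2>` to `<h4>`). It assumes a clean heading hierarchy is among the structure signals you want to enforce; the class and function names are hypothetical.

```python
from html.parser import HTMLParser

HEADING_LEVELS = {"h1": 1, "h2": 2, "h3": 3, "h4": 4, "h5": 5, "h6": 6}


class HeadingCollector(HTMLParser):
    """Record the level of every heading tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_LEVELS:  # html.parser lowercases tag names
            self.levels.append(HEADING_LEVELS[tag])


def heading_issues(raw_html: str) -> list[str]:
    """Return human-readable problems with the page's heading outline."""
    parser = HeadingCollector()
    parser.feed(raw_html)
    parser.close()
    issues = []
    h1_count = parser.levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one <h1>, found {h1_count}")
    # Going deeper by more than one level at a time breaks the outline.
    for prev, cur in zip(parser.levels, parser.levels[1:]):
        if cur > prev + 1:
            issues.append(f"heading jumps from h{prev} to h{cur}")
    return issues


if __name__ == "__main__":
    print(heading_issues("<h1>Audit</h1><h3>Step 1</h3>"))
```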

Enhance Semantic Richness:
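Structured data is one widely supported way to make a page's meaning explicit. A minimal sketch that builds a schema.org `Article` object as a JSON-LD `<script>` tag; the `article_jsonld` helper and the chosen fields are illustrative, not a complete markup recommendation.

```python
import json
from typing import Optional


def article_jsonld(headline: str, author: str, date_published: str, url: str,
                   description: Optional[str] = None) -> str:
    """Return a <script type="application/ld+json"> tag describing an Article."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date
        "mainEntityOfPage": url,
    }
    if description:
        data["description"] = description
    # Escape "</" so text such as "</script>" cannot close the tag early;
    # "<\/" is still valid JSON and decodes back to "</".
    payload = json.dumps(data, ensure_ascii=False, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    print(article_jsonld("Bot audit guide", "Jane Doe", "2026-01-15",
                         "https://example.com/blog/bot-audit"))
```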

Advanced Strategies for 2026

1. Dynamic Content Serving

Implement systems that can serve different versions of the same content (for example, HTML or Markdown) based on the crawler:

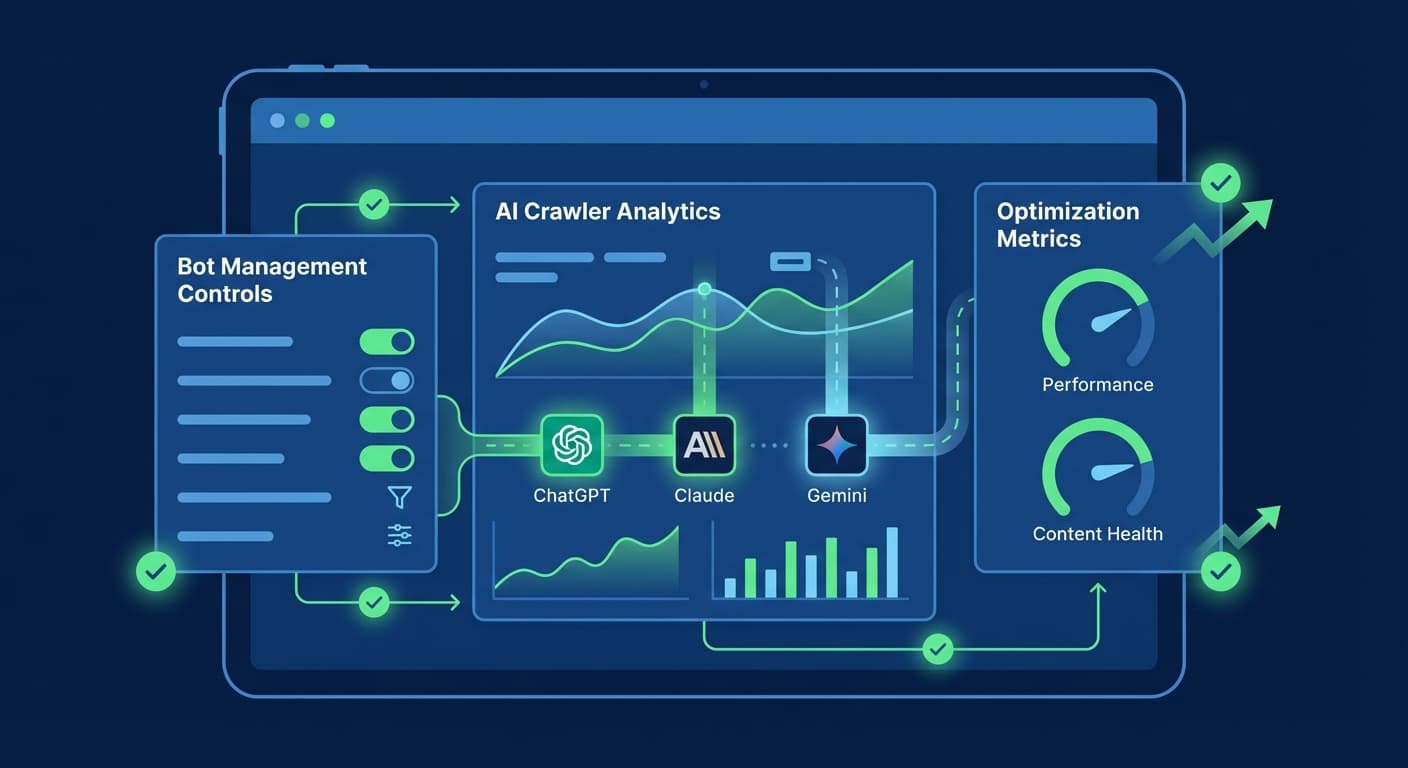
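A minimal sketch of crawler-aware serving that keeps the substance identical and varies only the format. Serving materially different content to crawlers than to users is what Google's spam policies call cloaking. The token list, `choose_format`, and `render` are hypothetical; the `Vary` header tells caches that the response depends on `User-Agent` and `Accept`.

```python
import html

# Hypothetical user-agent tokens that receive Markdown; adjust to your own policy.
MARKDOWN_AGENTS = ("gptbot", "chatgpt-user", "claudebot")


def choose_format(user_agent: str, accept: str = "") -> str:
    """Pick "markdown" or "html": an explicit Accept header wins, then the User-Agent."""
    if "text/markdown" in accept.lower():
        return "markdown"
    ua = user_agent.lower()
    if any(token in ua for token in MARKDOWN_AGENTS):
        return "markdown"
    return "html"


def render(article: dict, user_agent: str, accept: str = "") -> tuple[dict, str]:
    """Return (headers, body); both formats carry the same title and text."""
    headers = {"Vary": "User-Agent, Accept"}
    if choose_format(user_agent, accept) == "markdown":
        headers["Content-Type"] = "text/markdown; charset=utf-8"
        body = f"# {article['title']}\n\n{article['body']}\n"
    else:
        headers["Content-Type"] = "text/html; charset=utf-8"
        body = (
            f"<article><h1>{html.escape(article['title'])}</h1>"
            f"<p>{html.escape(article['body'])}</p></article>"
        )
    return headers, body


ARTICLE = {"title": "Bot audit", "body": "Check robots.txt first."}

if __name__ == "__main__":
    print(render(ARTICLE, "Mozilla/5.0 (compatible; GPTBot/1.1)"))
    print(render(ARTICLE, "Mozilla/5.0 (Windows NT 10.0)"))
```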

2. AI Crawler Analytics

Set up specialized tracking for AI crawler behavior:
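A minimal sketch of such tracking from "combined" format access logs: for each crawler it reports request volume, the share of 4xx/5xx responses (which can reveal broken links or blocked paths the crawler keeps hitting), and its most-requested paths. The token list, regex, helper name, and sample lines are illustrative, and user-agent matching alone does not prove a request came from the named operator.

```python
import re
from collections import Counter, defaultdict

# Lowercase user-agent tokens to track (illustrative).
CRAWLER_TOKENS = ("gptbot", "chatgpt-user", "claudebot", "bingbot", "petalbot")

# Request, status, referer and user-agent fields of a "combined" log line.
LOG_FIELDS = re.compile(
    r'"\S+ (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def crawler_report(lines):
    """Per crawler: request count, share of 4xx/5xx responses, and top 3 paths."""
    paths = defaultdict(Counter)
    errors = Counter()
    for line in lines:
        match = LOG_FIELDS.search(line)
        if not match:
            continue
        ua = match["ua"].lower()
        bot = next((t for t in CRAWLER_TOKENS if t in ua), None)
        if bot is None:
            continue
        paths[bot][match["path"]] += 1
        if int(match["status"]) >= 400:
            errors[bot] += 1
    report = {}
    for bot, counter in paths.items():
        total = sum(counter.values())
        report[bot] = {
            "requests": total,
            "error_rate": errors[bot] / total,
            "top_paths": [path for path, _ in counter.most_common(3)],
        }
    return report


# Made-up log lines for illustration.
SAMPLE = [
    '203.0.113.5 - - [10/Jan/2026:13:55:01 +0000] "GET /blog/a HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '203.0.113.5 - - [10/Jan/2026:13:56:12 +0000] "GET /blog/a HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '203.0.113.5 - - [10/Jan/2026:13:57:30 +0000] "GET /old-page HTTP/1.1" 404 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '198.51.100.7 - - [10/Jan/2026:13:58:40 +0000] "GET /resources/guide HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
]

if __name__ == "__main__":
    for bot, stats in crawler_report(SAMPLE).items():
        print(bot, stats)
```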

3. Proactive Content Signals

Use emerging signals to help AI crawlers understand your content:

How Citescope Ai Helps

Citescope Ai's platform addresses the complexities of AI crawler optimization through:

The platform's analytics help you understand which pages AI crawlers prefer and why, enabling data-driven optimization decisions.

Common Pitfalls to Avoid

Measuring Success

Track these key metrics to gauge your bot management optimization:

Looking Ahead: Future-Proofing Your Strategy

As agentic AI systems become more sophisticated, expect:

The websites that adapt their bot management strategies now will have a significant advantage as AI search continues to grow.

Ready to Optimize for AI Search?

The shift to agentic AI crawlers isn't just coming—it's here. With 47% of websites still using outdated bot management strategies, now is the time to audit and optimize your approach.

Citescope Ai makes this transition seamless with comprehensive analysis tools, one-click optimization, and real-time citation tracking. Start with our free tier to analyze your first 3 pieces of content and see how well they serve today's AI crawlers.

[Try Citescope Ai Free →]

Don't let outdated bot policies hold back your AI visibility. The future of search is here—make sure your content is ready for it.