How to Automate AI Visibility Monitoring Across ChatGPT, Perplexity, and Google AI Overviews When Manual Tracking Becomes Impossible at Enterprise Scale

With over 500 million weekly ChatGPT users and AI search now accounting for 35% of all online queries in 2025, enterprise businesses are facing a critical challenge: how do you monitor your brand's visibility across dozens of AI platforms when you're publishing hundreds of pieces of content monthly?

The days of manually checking whether your latest blog post got cited in a Perplexity response are long gone. At enterprise scale, where you are managing content for multiple brands, tracking competitor mentions, and optimizing across various AI search engines, manual monitoring is not just inefficient but unworkable.

The Enterprise AI Visibility Challenge

Enterprise content teams today are dealing with an unprecedented scale of content and platforms:

A recent survey of Fortune 500 marketing directors found that 78% struggle to track their AI visibility effectively, with most relying on sporadic manual checks that miss 85% of potential citations.

Why Manual Monitoring Fails at Scale

Time Investment Becomes Prohibitive

Manually checking a single piece of content across the five major AI platforms takes approximately 30 minutes. For an enterprise publishing 100 pieces monthly, that is 50 hours of manual work every month, before any competitive monitoring or historical analysis.
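The arithmetic behind that estimate is easy to rerun for your own publishing volume. The short sketch below uses the 30-minute figure from this article as a default; the function name is only illustrative.

```python
def monthly_manual_hours(pieces_per_month: int, minutes_per_piece: float = 30.0) -> float:
    """Hours of manual checking per month at a fixed per-piece cost."""
    return pieces_per_month * minutes_per_piece / 60.0


# 100 pieces * 30 minutes = 3,000 minutes = 50 hours
hours = monthly_manual_hours(100)
print(f"{hours:.0f} hours per month")
```

Swapping in your own volume or a different per-piece estimate shows how quickly the workload grows.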

Inconsistent Coverage

Manual monitoring typically focuses on:

This leaves massive blind spots in your visibility data.

Human Error and Bias

Manual checkers often:

The Automated Solution: Key Components

Effective enterprise AI visibility monitoring requires several automated components working together:

1. Multi-Platform API Integration

While not all AI platforms offer direct APIs for citation tracking, successful automation combines:

2. Semantic Content Matching

Modern monitoring systems need to identify when your content is cited even when:
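To make the matching problem concrete, here is a minimal sketch that checks how much of a source passage reappears in an AI response. It relies on simple word n-gram overlap, which is a lexical stand-in rather than true semantic matching; a production system would more likely compare embeddings. The function names and the 0.3 threshold are assumptions for illustration only.

```python
import re


def shingles(text: str, n: int = 3) -> set:
    """Lowercase the text, drop punctuation, and return its set of word n-grams."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def containment(source: str, response: str, n: int = 3) -> float:
    """Fraction of the source's n-grams that also appear in the response."""
    src = shingles(source, n)
    if not src:
        return 0.0
    return len(src & shingles(response, n)) / len(src)


def looks_cited(source: str, response: str, threshold: float = 0.3) -> bool:
    """Flag a response as a likely citation when enough of the source reappears."""
    return containment(source, response) >= threshold


source = "Automated monitoring scales across platforms"
response = "Experts note that automated monitoring scales across platforms, unlike manual checks."
print(looks_cited(source, response))
```

Because the comparison ignores case and punctuation, it tolerates light rewording around the quoted passage, but it will miss heavy paraphrasing, which is where an embedding-based approach becomes worth the extra cost.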

3. Competitive Intelligence Integration

Enterprise teams need to track:

4. Real-Time Alerting and Reporting

Automated systems should provide:
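As one illustration of alerting, the sketch below raises an alert when a platform's citation rate drops by more than a set margin between two checks. The `Alert` class, the `check_drop` function, and the 5-point default margin are hypothetical and not taken from any specific tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Alert:
    platform: str
    message: str


def check_drop(platform: str, previous_rate: float, current_rate: float,
               max_drop: float = 0.05) -> Optional[Alert]:
    """Return an Alert if the citation rate fell by more than max_drop, else None."""
    drop = previous_rate - current_rate
    if drop > max_drop:
        return Alert(
            platform,
            f"citation rate fell from {previous_rate:.0%} to {current_rate:.0%}",
        )
    return None


alert = check_drop("Perplexity", 0.15, 0.09)
if alert:
    print(f"[{alert.platform}] {alert.message}")
```

In practice the returned alert would be routed to email, chat, or a dashboard; that delivery step is left out here.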

Implementation Strategy for Enterprises

Phase 1: Audit and Baseline (Weeks 1-2)

Phase 2: Tool Selection and Setup (Weeks 3-4)

When evaluating monitoring solutions, consider:

Phase 3: Process Integration (Weeks 5-6)

Advanced Monitoring Strategies

Query Diversification

Your monitoring should test multiple query types:

Geographic and Demographic Targeting

AI responses can vary by:

Enterprise monitoring should account for these variations, especially for global brands.
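One way to cover these variations systematically is to expand every query into a job per platform and region. The sketch below assumes a simple cross product; the sample queries and region codes are placeholders, and `MonitoringJob` and `build_jobs` are illustrative names.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class MonitoringJob:
    platform: str
    query: str
    region: str


def build_jobs(platforms, queries, regions):
    """Create one monitoring job for every platform/query/region combination."""
    return [MonitoringJob(p, q, r) for p, q, r in product(platforms, queries, regions)]


platforms = ["ChatGPT", "Perplexity", "Google AI Overviews"]
queries = ["best project management software", "how to track AI citations"]  # placeholder queries
regions = ["US", "DE"]  # placeholder region codes

jobs = build_jobs(platforms, queries, regions)
print(len(jobs), "jobs scheduled")
```

The job count grows multiplicatively with each dimension, so global brands may need to sample regions rather than check every combination on every run.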

Historical Trend Analysis

Automated systems can reveal:
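A basic form of trend analysis is to convert weekly check results into citation rates and compare consecutive weeks. The sketch below does that with made-up numbers; the data and function names are illustrative assumptions.

```python
def citation_rate(cited: int, checked: int) -> float:
    """Share of checked responses that cited the brand's content."""
    return cited / checked if checked else 0.0


def week_over_week_change(rates):
    """Difference between each week's rate and the previous week's rate."""
    return [round(curr - prev, 4) for prev, curr in zip(rates, rates[1:])]


# (cited, checked) per week; made-up sample data
weekly_counts = [(12, 100), (15, 100), (9, 100)]
rates = [citation_rate(cited, checked) for cited, checked in weekly_counts]
changes = week_over_week_change(rates)
print(rates)
print(changes)
```

A sustained run of negative changes is a stronger signal than a single bad week, since AI responses vary from one run to the next.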

Common Implementation Challenges

Data Volume Management

Enterprise monitoring generates massive amounts of data. Successful implementations:

False Positive Management

Automated systems can flag irrelevant mentions. Address this through:
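As a simple illustration of filtering irrelevant mentions, the sketch below keeps a mention only when the brand name appears alongside at least one expected context term. The brand "Acme", the context terms, and the function name are all hypothetical, and the check assumes a single-word brand name.

```python
import re


def is_relevant_mention(text: str, brand: str, context_terms: set) -> bool:
    """True when the brand and at least one context term both appear as whole words."""
    words = set(re.findall(r"[a-z0-9]+", text.lower()))
    return brand.lower() in words and bool(words & context_terms)


context = {"analytics", "software", "dashboard"}
print(is_relevant_mention("Acme launched a new analytics dashboard", "Acme", context))
print(is_relevant_mention("The coyote ordered an Acme anvil", "Acme", context))
```

Keyword rules like this are cheap and transparent, but they need periodic review so that legitimate mentions in new contexts are not filtered out.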

Cross-Team Coordination

AI visibility data impacts multiple teams:

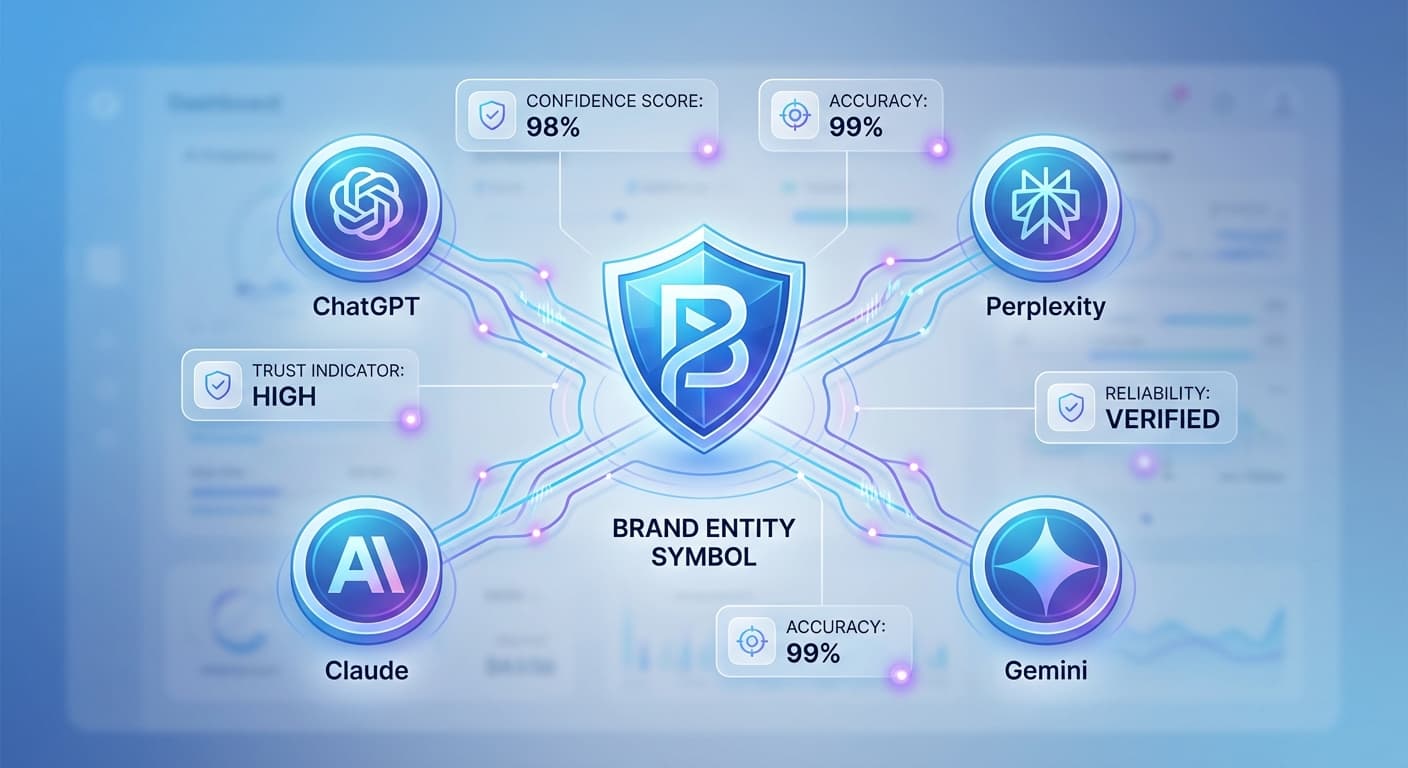

How Citescope Ai Helps

Citescope Ai's Citation Tracker addresses enterprise-scale monitoring challenges by providing:

The platform's GEO Score also helps optimize content for better AI visibility before publication, reducing the need for reactive monitoring.

Measuring ROI on Automated Monitoring

Successful enterprise implementations track:

Efficiency Metrics

Business Impact

Future-Proofing Your Monitoring Strategy

As AI search evolves, successful monitoring systems must be:

Ready to Optimize for AI Search?

Manual AI visibility tracking simply doesn't scale for enterprise content operations. With projections showing AI search accounting for 50% of all searches by 2027, automated monitoring becomes essential for a competitive content strategy.

Citescope Ai provides enterprise-grade AI visibility monitoring with comprehensive coverage across all major AI platforms. Start with our free tier to test the system with up to 3 content optimizations per month, or contact our team to discuss enterprise deployment for your organization. Don't let your competition dominate AI search while you're still checking citations manually.