How to Build a Hallucination Detection System When AI Search Engines Like ChatGPT and Perplexity Are Citing Your Brand Incorrectly and Costing You Sales

A Fortune 500 SaaS company recently discovered that ChatGPT was telling 30% of users their pricing was $200/month higher than reality. The cost? An estimated $2.3 million in lost revenue over six months. Welcome to the hidden crisis of AI hallucinations – where search engines confidently cite your brand with completely fabricated information.

With AI search now handling over 35% of all queries in 2026 and ChatGPT alone serving 600 million weekly users, AI hallucinations about your brand aren't just an inconvenience – they're a business-critical threat that requires systematic detection and correction.

The Growing Crisis of Brand Misinformation in AI Search

AI hallucinations occur when language models generate confident-sounding but factually incorrect information. For brands, this manifests as:

Recent studies show that 23% of AI-generated responses about specific brands contain at least one factual error, with pricing and feature information being the most commonly hallucinated elements.

Why Traditional Brand Monitoring Falls Short

Conventional brand monitoring tools weren't designed for AI search engines. They typically focus on:

But AI engines don't just aggregate existing content – they synthesize and reinterpret information, creating novel combinations that can introduce errors even when source materials are accurate.

Building Your AI Hallucination Detection System: A Step-by-Step Framework

Step 1: Map Your Brand's Critical Information Points

Start by cataloging the facts AI engines should know about your brand:

Essential Brand Facts:

Create a "Brand Truth Database" – a comprehensive, regularly updated document that serves as your reference point for fact-checking AI responses.
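A Brand Truth Database does not need special tooling to start. The sketch below is one possible shape for it, not a prescribed schema: the field names, the example facts, the `example.com` URLs, and the 90-day staleness threshold are all illustrative assumptions.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, List, Optional


@dataclass(frozen=True)
class BrandFact:
    """One verifiable fact about the brand (field names are hypothetical)."""
    key: str             # stable identifier, e.g. "starter_price_usd_month"
    value: str           # canonical value, stored as text
    category: str        # e.g. "pricing" or "features"
    source_url: str      # page where the authoritative statement lives
    last_verified: date  # when a human last confirmed the value


@dataclass
class BrandTruthDatabase:
    facts: Dict[str, BrandFact] = field(default_factory=dict)

    def add(self, fact: BrandFact) -> None:
        self.facts[fact.key] = fact

    def get(self, key: str) -> Optional[BrandFact]:
        return self.facts.get(key)

    def stale(self, today: date, max_age_days: int = 90) -> List[str]:
        """Keys of facts not re-verified within max_age_days (assumed threshold)."""
        return sorted(
            key for key, fact in self.facts.items()
            if (today - fact.last_verified).days > max_age_days
        )


# Illustrative entries only; replace with your own verified facts.
db = BrandTruthDatabase()
db.add(BrandFact("starter_price_usd_month", "49", "pricing",
                 "https://example.com/pricing", date(2026, 1, 15)))
db.add(BrandFact("sso_supported", "yes", "features",
                 "https://example.com/features", date(2025, 6, 1)))
```

Recording `last_verified` alongside each fact keeps the "regularly updated" requirement checkable: `db.stale(today)` lists the entries that are due for a manual re-check.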

Step 2: Develop Comprehensive Query Testing

Create systematic test queries that cover your brand from multiple angles:

Direct Brand Queries:

Comparison Queries:

Use Case Queries:

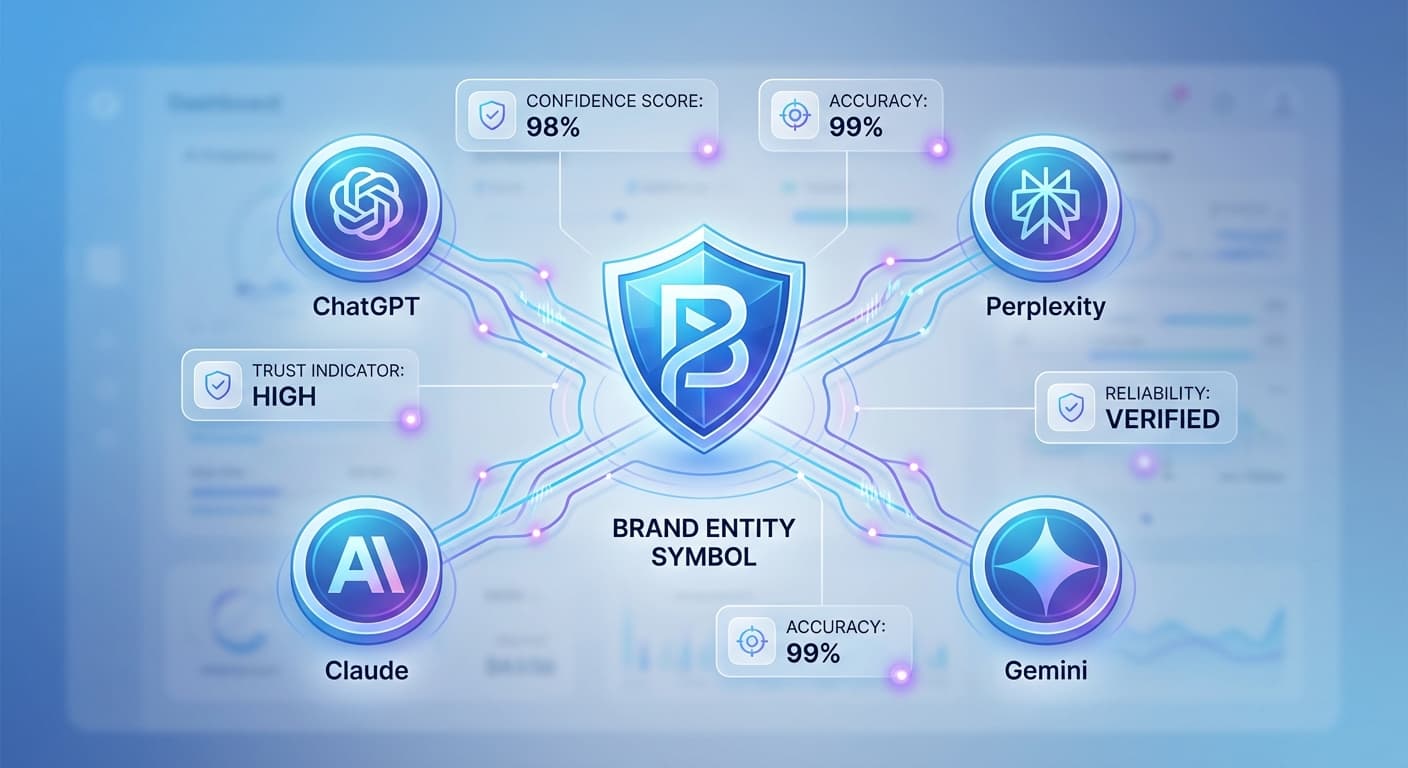

Run these queries across multiple AI engines. ChatGPT, Claude, Gemini, and Perplexity often give different responses to the same question, so a hallucination may appear in some engines but not others.
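The query set and the engine list form a matrix: every query runs against every engine. The sketch below shows one way to build that matrix. The templates are hypothetical examples for the three query types above, and each engine is represented by a plain callable that takes a question and returns text; how you actually reach each engine (API, browser automation, manual export) is outside the sketch and is an assumption you would fill in.

```python
from itertools import product
from typing import Callable, Dict, List, Tuple

# Hypothetical templates, one group per query type named above.
QUERY_TEMPLATES: Dict[str, List[str]] = {
    "direct": ["What does {brand} cost?", "What features does {brand} offer?"],
    "comparison": ["How does {brand} compare to {competitor}?"],
    "use_case": ["Is {brand} a good fit for {use_case}?"],
}


def build_queries(brand: str, competitors: List[str],
                  use_cases: List[str]) -> List[Tuple[str, str]]:
    """Expand the templates into (category, query) pairs."""
    queries: List[Tuple[str, str]] = []
    for category, templates in QUERY_TEMPLATES.items():
        for template in templates:
            if "{competitor}" in template:
                for competitor in competitors:
                    queries.append((category, template.format(
                        brand=brand, competitor=competitor)))
            elif "{use_case}" in template:
                for use_case in use_cases:
                    queries.append((category, template.format(
                        brand=brand, use_case=use_case)))
            else:
                queries.append((category, template.format(brand=brand)))
    return queries


def run_matrix(queries: List[Tuple[str, str]],
               engines: Dict[str, Callable[[str], str]]) -> List[dict]:
    """Ask every engine every query and keep the raw responses."""
    results = []
    for (category, query), (engine, ask) in product(queries, engines.items()):
        results.append({"engine": engine, "category": category,
                        "query": query, "response": ask(query)})
    return results


# Stub engines standing in for real clients (assumption: you supply these).
stub_engines = {
    "engine_a": lambda q: "Acme starts at $49/month.",
    "engine_b": lambda q: "Acme starts at $249/month.",
}
queries = build_queries("Acme", ["Globex"], ["small teams"])
results = run_matrix(queries, stub_engines)
```

Keeping the engines behind a simple callable means the same matrix code works whether responses come from an API or from answers pasted in by hand.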

Step 3: Implement Automated Monitoring

Set up systems to regularly test AI responses:

Daily Monitoring:

Weekly Deep Dives:

Monthly Audits:
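The daily, weekly, and monthly tiers can be tracked with a very small scheduler. The following is a minimal sketch, assuming you record the date each tier last ran; the tier names mirror the headings above, and treating "monthly" as 30 days is a simplifying assumption.

```python
from datetime import date
from typing import Dict, List

# Interval in days per monitoring tier (30 days for "monthly" is an assumption).
CADENCE_DAYS: Dict[str, int] = {"daily": 1, "weekly": 7, "monthly": 30}


def due_checks(last_run: Dict[str, date], today: date) -> List[str]:
    """Return the tiers whose interval has elapsed, or that have never run."""
    due = []
    for tier, interval in CADENCE_DAYS.items():
        previous = last_run.get(tier)
        if previous is None or (today - previous).days >= interval:
            due.append(tier)
    return due


last_run = {
    "daily": date(2026, 1, 9),
    "weekly": date(2026, 1, 5),
    "monthly": date(2026, 1, 1),
}
due_today = due_checks(last_run, date(2026, 1, 10))
```

A scheduled job (cron or similar) can call `due_checks` once a day and run only the tiers it returns.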

Step 4: Create a Hallucination Severity Classification

Critical Hallucinations (Immediate Action Required):

Moderate Hallucinations (Address Within 48 Hours):

Minor Hallucinations (Weekly Review):
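The three severity tiers can be encoded so that findings are sorted for triage automatically. In the sketch below, the response windows come from the tier names above, but the mapping from fact category to severity is a hypothetical example: which categories count as critical is a decision for your own team.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Dict, List


class Severity(IntEnum):
    CRITICAL = 1
    MODERATE = 2
    MINOR = 3


# Response windows taken from the tier names above.
RESPONSE_WINDOW: Dict[Severity, str] = {
    Severity.CRITICAL: "immediate action required",
    Severity.MODERATE: "address within 48 hours",
    Severity.MINOR: "weekly review",
}

# Hypothetical mapping; adjust to your own definition of each tier.
CATEGORY_SEVERITY: Dict[str, Severity] = {
    "pricing": Severity.CRITICAL,
    "features": Severity.MODERATE,
}


@dataclass
class Finding:
    engine: str    # which AI engine produced the claim
    category: str  # fact category, e.g. "pricing"
    claimed: str   # what the engine said
    expected: str  # what the Brand Truth Database says


def classify(finding: Finding) -> Severity:
    """Unmapped categories default to MINOR."""
    return CATEGORY_SEVERITY.get(finding.category, Severity.MINOR)


def triage(findings: List[Finding]) -> List[Finding]:
    """Most severe findings first; ties keep their original order."""
    return sorted(findings, key=classify)


findings = [
    Finding("gemini", "founding_year", "2015", "2016"),
    Finding("chatgpt", "pricing", "$249/month", "$49/month"),
    Finding("claude", "features", "no SSO", "SSO included"),
]
ordered = triage(findings)
```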

Advanced Detection Techniques

Semantic Consistency Checking

AI engines should provide consistent information about your brand across similar queries. Test variations like:

Inconsistencies often reveal hallucination patterns.
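For numeric facts such as pricing, a consistency check can be partly automated. The sketch below is a simple illustration rather than a full semantic comparison: it pulls dollar amounts out of each response with a regular expression and reports whether the query variations agree. The sample queries and responses are made up.

```python
import re
from typing import Dict, List

# Matches amounts such as $49, $1,299 or $49.99 (US-style formatting assumed).
PRICE_PATTERN = re.compile(r"\$\s?(\d+(?:,\d{3})*(?:\.\d+)?)")


def extract_prices(text: str) -> List[float]:
    """Return every dollar amount mentioned in the text."""
    return [float(match.replace(",", ""))
            for match in PRICE_PATTERN.findall(text)]


def consistency_report(responses: Dict[str, str]) -> dict:
    """Compare the prices quoted across variations of the same question."""
    prices_by_query = {query: sorted(set(extract_prices(answer)))
                       for query, answer in responses.items()}
    distinct = sorted({price
                       for prices in prices_by_query.values()
                       for price in prices})
    return {
        "prices_by_query": prices_by_query,
        "distinct_prices": distinct,
        "consistent": len(distinct) <= 1,
    }


# Made-up responses to three phrasings of the same pricing question.
sample = {
    "How much does Acme cost?": "Acme starts at $49 per month.",
    "What is Acme's pricing?": "Plans begin at $49/month.",
    "Is Acme expensive?": "Acme costs $249 per month.",
}
report = consistency_report(sample)
```

A report with `consistent` set to `False` does not say which answer is wrong; it only marks the query group for comparison against your Brand Truth Database.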

Citation Source Analysis

When AI engines provide sources (particularly Perplexity and Claude), verify:

Cross-Platform Validation

The same hallucination rarely appears across all AI platforms simultaneously. Use this to your advantage:
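One way to apply this is to compare what each platform says about a single fact and flag the platforms that disagree. The sketch below is a rough illustration with made-up claims: when a verified value is available it is used as the reference, and otherwise the most common answer is used. Agreement between platforms is only a signal, not proof, so a majority answer should still be checked against your own records.

```python
from collections import Counter
from typing import Dict, List, Optional


def find_outliers(claims: Dict[str, str],
                  expected: Optional[str] = None) -> List[str]:
    """Return the engines whose claim differs from the reference value.

    The reference is `expected` when given, otherwise the most common claim.
    """
    normalized = {engine: claim.strip().lower()
                  for engine, claim in claims.items()}
    if expected is not None:
        reference = expected.strip().lower()
    else:
        reference, _count = Counter(normalized.values()).most_common(1)[0]
    return sorted(engine for engine, claim in normalized.items()
                  if claim != reference)


# Made-up claims about one fact, keyed by platform.
claims = {
    "chatgpt": "$49/month",
    "claude": "$49/month",
    "gemini": "$249/month",
    "perplexity": "$49/Month ",
}
outliers = find_outliers(claims)
```

Passing `expected` from the Brand Truth Database is the safer mode, since it also catches the case where most platforms repeat the same error.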

Rapid Response and Correction Strategies

Source Content Optimization

When you identify hallucinations, trace them back to their likely sources:

Strategic Content Distribution

Ensure your correct information reaches AI training pipelines:

Direct Engagement with AI Platforms

While not always successful, consider:

How Citescope Ai Helps with Hallucination Detection

While building manual detection systems is crucial, tools like Citescope Ai can streamline the process. The Citation Tracker monitors when your content gets cited by ChatGPT, Perplexity, Claude, and Gemini, allowing you to:

The GEO Score analysis also helps ensure your source content is structured in ways that reduce the likelihood of misinterpretation by AI engines.

Measuring the Business Impact

Track the effectiveness of your hallucination detection system through:

Leading Indicators:

Business Metrics:

Building Long-term Resistance to AI Hallucinations

Content Strategy Adjustments

Proactive Monitoring Culture

Make hallucination detection part of your regular marketing operations:

The Future of AI Hallucination Management

As AI search continues evolving, expect:

Staying ahead requires treating hallucination detection not as a one-time project, but as an ongoing operational necessity.

How Citescope Ai Helps

Citescope Ai provides the infrastructure for systematic AI search monitoring and optimization:

With a free plan that includes 3 optimizations per month, you can begin building your hallucination detection system today.

Ready to Optimize for AI Search?

Don't let AI hallucinations damage your brand and cost you sales. Start monitoring how AI engines are citing your brand with Citescope Ai's Citation Tracker and GEO optimization tools. Get your first 3 content optimizations free and take control of your AI search presence today.