How to Build a Measurement Framework for LLM Visibility When Traditional Analytics Can't Track Brand Mentions Across ChatGPT, Perplexity, and Gemini

With over 500 million weekly active users on ChatGPT alone and AI search accounting for 35% of all queries in 2025, brand visibility in large language models (LLMs) has become a critical marketing metric. Yet 73% of brands admit they have no systematic way to track their mentions across AI platforms like ChatGPT, Perplexity, Claude, and Gemini.

The challenge is clear: traditional web analytics tools like Google Analytics can't see into the black box of AI responses. When someone asks ChatGPT "What are the best project management tools?" and your brand gets mentioned (or doesn't), there's no pixel to track, no referral traffic to measure, and no conversion path to analyze.

This visibility gap represents both a massive risk and an opportunity. Brands that figure out LLM measurement first will have a significant competitive advantage in the AI-driven search landscape of 2026.

The LLM Visibility Problem: Why Traditional Metrics Fall Short

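Traditional digital marketing measurement relies on trackable interactions: clicks, impressions, sessions, and conversions. But LLM interactions happen in closed environments where none of these signals are exposed. The answer is generated inside the AI product, with no tracking pixel, referral, or conversion path for your analytics to record.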

A recent study by the AI Marketing Institute found that brands mentioned in AI responses see 23% higher brand recall than those that aren't, yet only 18% of marketers actively monitor their LLM presence.

The Four Pillars of LLM Visibility Measurement

To build an effective measurement framework, you need to track four core dimensions:

1. Citation Volume and Frequency

This measures how often your brand, products, or content gets referenced across different AI platforms and query types.
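As a rough illustration of citation frequency, the sketch below computes the share of logged responses that mention a brand on each platform. The `(platform, query, response)` log format, the function name, and the brand names "Acme" and "Globex" are all assumptions made for the example, not the output of any particular tool.

```python
import re
from collections import defaultdict

def mention_rate_by_platform(records, brand):
    """Return {platform: share of logged responses that mention `brand`}.

    `records` is an iterable of (platform, query, response_text) tuples.
    This log format is hypothetical; adapt it to however you store responses.
    """
    # Whole-word, case-insensitive match so "Acme" does not match "Acmeville".
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    totals = defaultdict(int)
    hits = defaultdict(int)
    for platform, _query, response in records:
        totals[platform] += 1
        if pattern.search(response):
            hits[platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}

# Made-up sample data for illustration only.
sample = [
    ("chatgpt", "best project management tools", "Popular options include Acme and Globex."),
    ("chatgpt", "project management for startups", "Consider Globex for small teams."),
    ("perplexity", "best project management tools", "Acme is frequently recommended."),
]
rates = mention_rate_by_platform(sample, "Acme")
```

Tracking a rate rather than a raw count keeps platforms comparable even when you test a different number of queries on each.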

Key metrics to track:

2. Context Quality and Sentiment

Not all AI mentions are created equal. Being mentioned in a negative context or as a poor example hurts more than it helps.
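One crude way to separate helpful mentions from harmful ones is to label the sentence around each mention. The sketch below uses two small, hypothetical word lists as a stand-in for a real sentiment model, so treat its labels as a first pass for human review rather than a verdict.

```python
import re

# Hypothetical cue words; a production setup would use a proper sentiment model.
POSITIVE = {"best", "recommended", "reliable", "popular", "excellent"}
NEGATIVE = {"avoid", "outdated", "expensive", "buggy", "poor"}

def mention_contexts(response, brand):
    """Return (sentence, label) for every sentence that mentions `brand`."""
    sentences = re.split(r"(?<=[.!?])\s+", response)
    results = []
    for sentence in sentences:
        lowered = sentence.lower()
        if brand.lower() not in lowered:
            continue
        words = set(re.findall(r"[a-z']+", lowered))
        # Positive cues add to the score, negative cues subtract from it.
        score = len(words & POSITIVE) - len(words & NEGATIVE)
        if score > 0:
            label = "positive"
        elif score < 0:
            label = "negative"
        else:
            label = "neutral"
        results.append((sentence, label))
    return results

text = (
    "Acme is a reliable choice for small teams. "
    "Some reviewers find Acme expensive and buggy. "
    "Globex is popular."
)
labels = [label for _sentence, label in mention_contexts(text, "Acme")]
```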

Key metrics to track:

3. Content Source Attribution

Understanding which of your content pieces drive AI citations helps optimize your content strategy.
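Where a platform shows source links alongside its answer, you can tally which of your pages are cited. The sketch below assumes you have already collected those citation URLs into a list; `example.com` and the function name are placeholders.

```python
from collections import Counter
from urllib.parse import urlparse

def cited_pages(citation_urls, own_domain):
    """Count citations per URL path for links on `own_domain` or its subdomains."""
    counts = Counter()
    for url in citation_urls:
        parsed = urlparse(url)
        host = (parsed.hostname or "").lower()
        # Match the bare domain and subdomains such as www.example.com,
        # but not unrelated hosts like notexample.com.
        if host == own_domain or host.endswith("." + own_domain):
            counts[parsed.path or "/"] += 1
    return counts.most_common()

# Made-up citation URLs for illustration.
urls = [
    "https://www.example.com/blog/pm-guide",
    "https://example.com/blog/pm-guide",
    "https://other.org/review",
    "https://example.com/pricing",
]
top_pages = cited_pages(urls, "example.com")
```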

Key metrics to track:

4. Query Intent and User Journey

Tracking what types of questions trigger your mentions reveals user intent and journey stages.
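A simple starting point for intent tracking is to bucket each test query with keyword rules and then count mentions per bucket. The labels and cue phrases below are illustrative assumptions; you would tune both to your own category and funnel.

```python
import re
from collections import Counter

# Hypothetical rules, checked in order; the first matching label wins.
INTENT_RULES = [
    ("comparison", ("vs", "versus", "alternative", "alternatives", "compare")),
    ("transactional", ("pricing", "price", "buy", "cost", "free trial")),
    ("informational", ("what is", "how to", "how do", "why")),
]

def classify_intent(query):
    """Assign a coarse intent label to a query using whole-word cue matching."""
    cleaned = re.sub(r"[^\w\s]", " ", query.lower())
    # Pad with spaces so cues only match on word boundaries.
    text = " " + " ".join(cleaned.split()) + " "
    for label, cues in INTENT_RULES:
        if any(f" {cue} " in text for cue in cues):
            return label
    return "other"

def mentions_by_intent(mentioned_queries):
    """Count, per intent label, the queries whose responses mentioned the brand."""
    return Counter(classify_intent(query) for query in mentioned_queries)

summary = mentions_by_intent([
    "Acme vs Globex",
    "How to plan a sprint?",
    "Acme pricing?",
    "best project management tools",
])
```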

Key metrics to track:

Building Your LLM Measurement Stack: A Step-by-Step Framework

Step 1: Establish Baseline Measurements

Before you can improve, you need to know where you stand.

Weeks 1-2: Manual Audit

Weeks 3-4: Competitor Analysis

Step 2: Set Up Automated Monitoring

Manual checking doesn't scale. You need systematic monitoring across platforms.
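A minimal sketch of one monitoring pass might look like the following. It deliberately avoids naming any vendor API: each platform is represented by a function you supply that takes a query and returns response text, and the stub functions here are placeholders for those real calls.

```python
from datetime import datetime, timezone

def run_monitoring_pass(queries, clients, brand):
    """Ask every query on every platform and record whether `brand` appears.

    `clients` maps a platform name to a callable(query) -> response text.
    The callables are assumptions; wire them to whatever access you have.
    """
    rows = []
    for platform, ask in clients.items():
        for query in queries:
            row = {
                "checked_at": datetime.now(timezone.utc).isoformat(),
                "platform": platform,
                "query": query,
                "mentioned": None,
                "error": None,
            }
            try:
                response = ask(query)
                row["mentioned"] = brand.lower() in response.lower()
            except Exception as exc:
                # Record the failure and keep going so one outage
                # does not abort the whole pass.
                row["error"] = str(exc)
            rows.append(row)
    return rows

# Stub clients stand in for real platform calls in this sketch.
def fake_chatgpt(query):
    return "Teams often pick Acme or Globex."

def fake_perplexity(query):
    raise TimeoutError("request timed out")

rows = run_monitoring_pass(
    ["best project management tools"],
    {"chatgpt": fake_chatgpt, "perplexity": fake_perplexity},
    brand="Acme",
)
```

Because responses vary between runs, scheduling this pass repeatedly and storing every row gives a more reliable picture than any single check.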

Essential monitoring setup:

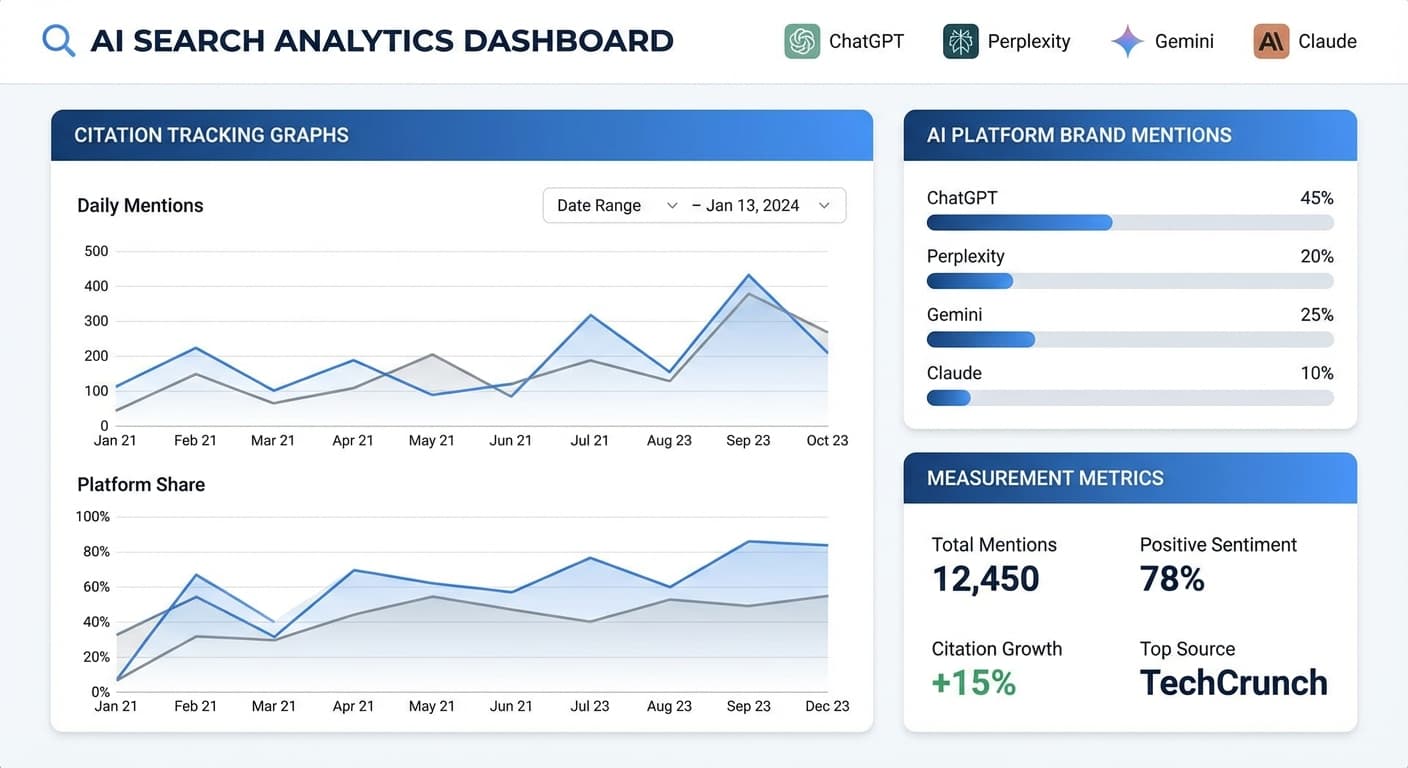

Tools like Citescope Ai's Citation Tracker can automate this process, monitoring your brand mentions across all major AI platforms and alerting you to changes in citation patterns.

Step 3: Create Response Templates and Testing Protocols

Standardize how you test and what you're looking for.
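One way to standardize testing is to generate the query set from templates, so every run asks the same questions in the same order. The templates, categories, and audiences below are made-up examples.

```python
from itertools import product

# Hypothetical templates; {category} and {audience} are filled in per combination.
TEMPLATES = [
    "What are the best {category} tools?",
    "Which {category} tool is best for {audience}?",
]

def build_test_queries(templates, categories, audiences):
    """Expand templates into a de-duplicated, order-stable list of queries."""
    queries = []
    for template, category, audience in product(templates, categories, audiences):
        query = template.format(category=category, audience=audience)
        # Templates that ignore {audience} produce repeats; keep the first only.
        if query not in queries:
            queries.append(query)
    return queries

test_queries = build_test_queries(
    TEMPLATES,
    categories=["project management"],
    audiences=["startups", "agencies"],
)
```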

Query testing framework:

Step 4: Develop Attribution Models

Connect LLM mentions to business outcomes where possible.

Attribution strategies:

Advanced Measurement Techniques for 2026

Semantic Clustering Analysis

Group similar queries to understand topic ownership and identify content gaps.
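The sketch below groups queries by word overlap (Jaccard similarity) with a greedy single pass. Token overlap is only a crude stand-in for true semantic similarity, which would normally use text embeddings; the stopword list and the 0.5 threshold are arbitrary assumptions.

```python
import re

# Small hypothetical stopword list; extend it for real use.
STOPWORDS = {"the", "a", "for", "to", "what", "are", "is", "of", "in"}

def tokens(query):
    """Lowercased word set for a query, minus stopwords."""
    return set(re.findall(r"[a-z0-9]+", query.lower())) - STOPWORDS

def jaccard(a, b):
    """Size of the intersection divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def cluster_queries(queries, threshold=0.5):
    """Greedily assign each query to the first cluster whose seed is similar enough."""
    clusters = []  # list of (seed token set, member queries)
    for query in queries:
        query_tokens = tokens(query)
        for seed_tokens, members in clusters:
            if jaccard(query_tokens, seed_tokens) >= threshold:
                members.append(query)
                break
        else:
            # No existing cluster matched, so this query seeds a new one.
            clusters.append((query_tokens, [query]))
    return [members for _seed, members in clusters]

groups = cluster_queries([
    "best project management tools",
    "best tools for project management",
    "how to price a saas product",
])
```

Comparing your mention rate per cluster then shows which topics you already own and which have gaps.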

Cross-Platform Journey Mapping

Track how mentions across different AI platforms influence the customer journey.

Predictive Citation Modeling

Use historical data to predict which content types and topics will drive future citations.
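As a very simple baseline for prediction, you can fit a straight line to weekly citation counts and extrapolate one week ahead. This is an ordinary least-squares trend, not a full predictive model, and the weekly counts shown are invented for the example.

```python
def linear_trend(values):
    """Least-squares slope and intercept for values observed at x = 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def forecast_next(values):
    """Extrapolate the fitted line one step past the last observation."""
    slope, intercept = linear_trend(values)
    return slope * len(values) + intercept

# Invented weekly citation counts for one content type.
weekly_citations = [2, 4, 6, 8]
next_week = forecast_next(weekly_citations)
```

Fitting one trend per content type or topic gives a rough ranking of where citations are growing fastest, which is usually more useful than the point forecast itself.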

Real-Time Optimization Triggers

Set up alerts for sudden changes in mention patterns that require immediate content response.
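A sudden change can be flagged by comparing the latest mention rate with its recent history. The sketch below uses a z-score against the trailing mean; the threshold of 2.0 and the minimum of four data points are assumptions to tune, not recommended values.

```python
from statistics import mean, stdev

def should_alert(history, latest, z_threshold=2.0, min_points=4):
    """Return True when `latest` deviates sharply from the trailing `history`."""
    if len(history) < min_points:
        return False  # not enough data to judge what is normal
    spread = stdev(history)
    if spread == 0:
        # A perfectly flat history: any change at all is notable.
        return latest != history[-1]
    z_score = abs(latest - mean(history)) / spread
    return z_score >= z_threshold

# Invented daily mention rates for illustration.
recent_rates = [0.40, 0.42, 0.38, 0.41, 0.39]
drop_detected = should_alert(recent_rates, 0.20)
normal_day = should_alert(recent_rates, 0.41)
```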

Common Measurement Pitfalls to Avoid

Over-focusing on volume: A single high-quality mention in the right context beats 10 low-relevance mentions.

Ignoring negative mentions: Track and respond to negative context mentions as aggressively as you pursue positive ones.

Platform bias: Each AI platform has different strengths, so don't assume ChatGPT performance predicts Perplexity performance.

Short-term thinking: LLM citation building is a long-term strategy, so don't expect overnight results.

How Citescope Ai Helps Solve LLM Measurement Challenges

Building a comprehensive LLM measurement framework manually is time-intensive and error-prone. Citescope Ai's Citation Tracker automates the entire process, providing:

The platform integrates with your existing analytics stack, providing the missing piece of your measurement framework while saving hours of manual monitoring work.

Creating Your LLM Measurement Dashboard

Your measurement framework needs a clear dashboard that stakeholders can understand and act on.

Executive Dashboard (Monthly View)

Marketing Dashboard (Weekly View)

Content Dashboard (Daily View)

Measuring ROI from LLM Visibility Efforts

To justify investment in LLM optimization, connect visibility metrics to business outcomes:

Brand awareness correlation: Survey customers about AI tool usage and brand recall

Organic search lift: Measure increases in branded search volume following citation spikes
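Branded search lift can be estimated by comparing average daily volume before and after a citation spike. The sketch assumes you already have a list of daily branded search counts and know the index of the spike day; the seven-day window is an arbitrary choice, and a lift on its own shows correlation, not causation.

```python
def branded_search_lift(daily_volume, spike_index, window=7):
    """Relative change in mean daily volume after `spike_index` versus before it."""
    before = daily_volume[max(0, spike_index - window):spike_index]
    after = daily_volume[spike_index:spike_index + window]
    if not before or not after:
        raise ValueError("need data on both sides of the spike")
    baseline = sum(before) / len(before)
    if baseline == 0:
        raise ValueError("baseline volume is zero, so lift is undefined")
    return (sum(after) / len(after) - baseline) / baseline

# Invented data: 100 searches per day, then 120 per day after the spike.
volume = [100] * 7 + [120] * 7
lift = branded_search_lift(volume, spike_index=7)
```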

Content performance: Track traffic and engagement on content pieces that get cited

Customer acquisition: Use UTM parameters and surveys to track AI-influenced conversions
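For links you control, UTM tagging can be scripted so the parameters stay consistent. The helper below is a sketch: the `utm_medium` and `utm_campaign` defaults are hypothetical naming choices, and it only helps where an AI platform actually passes your tagged URL through to the visitor.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse

def add_utm(url, source, medium="referral", campaign="llm-visibility"):
    """Return `url` with UTM parameters added, keeping existing query parameters."""
    parts = urlparse(url)
    query = dict(parse_qsl(parts.query))
    # Existing UTM values, if any, are overwritten by the new ones.
    query.update({
        "utm_source": source,
        "utm_medium": medium,
        "utm_campaign": campaign,
    })
    return urlunparse(parts._replace(query=urlencode(query)))

tagged = add_utm("https://example.com/guide?ref=1", "chatgpt")
```

Pairing tagged links with a "How did you hear about us?" survey question covers the visits that arrive without any parameters.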

Future-Proofing Your Measurement Framework

The AI search landscape continues to evolve rapidly, so build flexibility into your framework.

Ready to Optimize for AI Search?

Building an effective LLM visibility measurement framework requires the right tools and a systematic approach. Citescope Ai provides everything you need to track, analyze, and optimize your brand's presence across all major AI platforms. Our Citation Tracker eliminates the guesswork, giving you clear visibility into your AI search performance with automated monitoring, competitor analysis, and actionable insights.

Start measuring your LLM visibility today with a free Citescope Ai account. Get 3 free content optimizations and see exactly how your brand performs in AI search results.