How to Build a Multimodal Schema Optimization Framework When AI Search Engines Prioritize Image and Video Context Over Text-Only Content in 2026

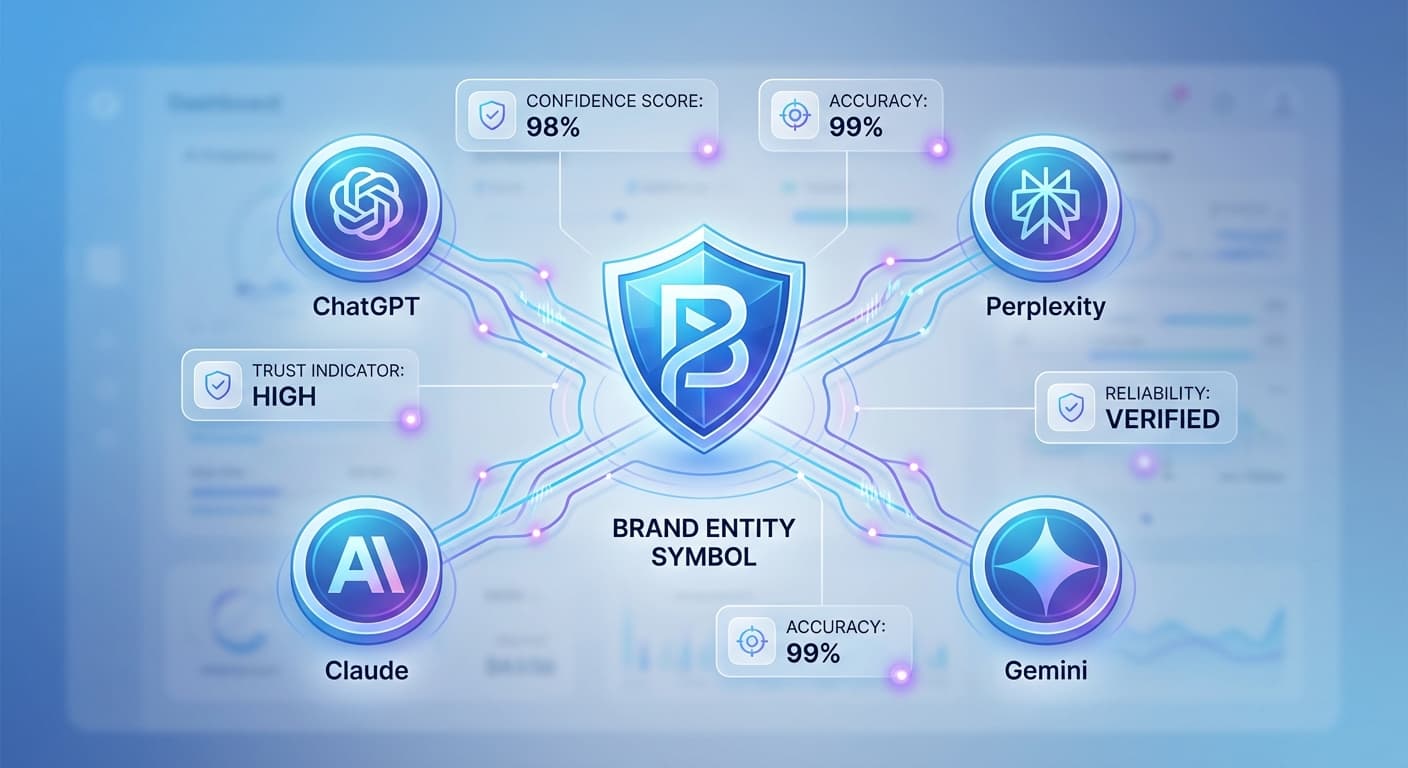

By 2026, the content landscape has fundamentally shifted. AI search engines like ChatGPT, Perplexity, Claude, and Gemini now process over 2.8 billion multimodal queries monthly, with 73% of users expecting responses that combine text, images, and video insights. Traditional text-only optimization strategies are becoming obsolete as AI engines increasingly prioritize rich, contextually diverse content that spans multiple formats.

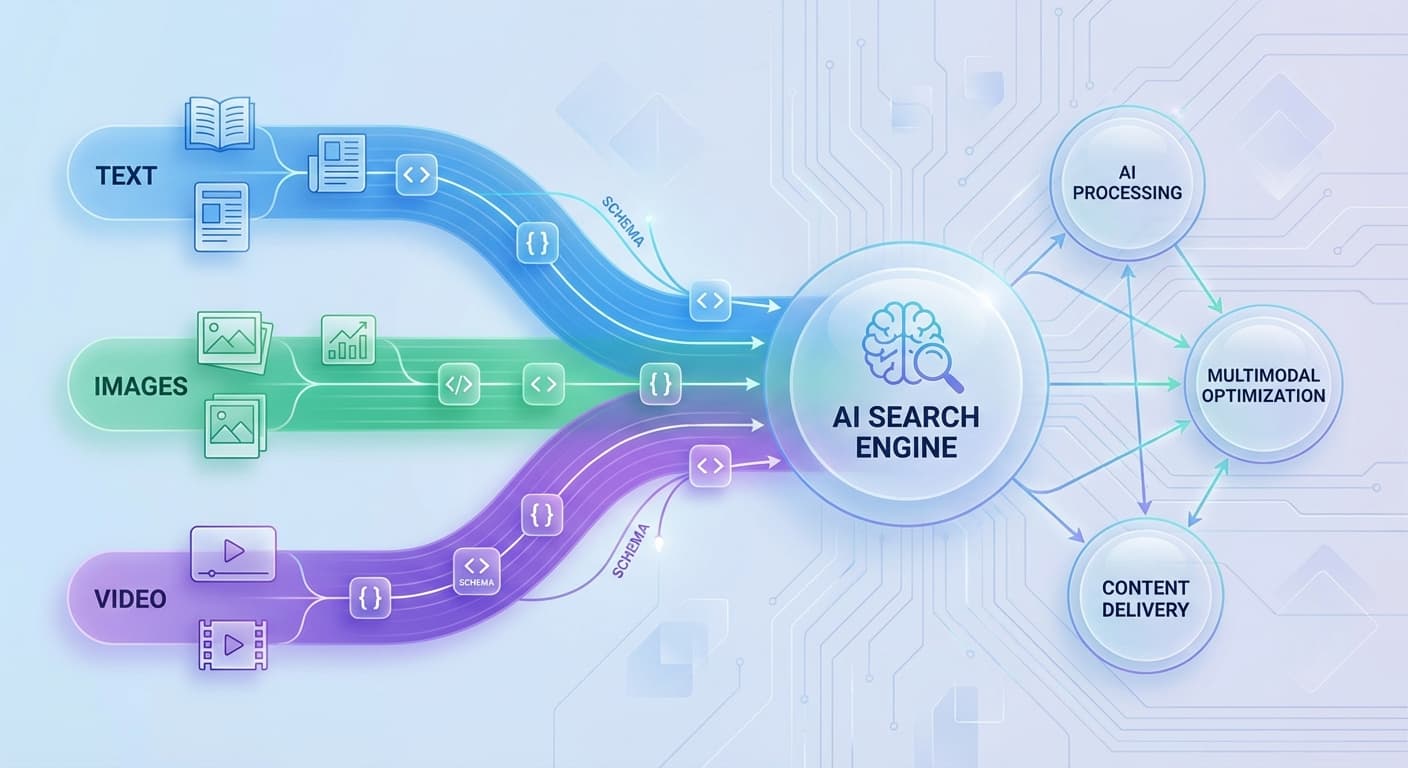

If you're still optimizing content in silos, treating images, videos, and text as separate entities, you're missing major opportunities for AI visibility. The future belongs to creators who know how to build cohesive multimodal schema frameworks that help AI engines understand and cite their content across all formats.

Why Multimodal Schema Matters More Than Ever in 2026

The statistics tell a compelling story. Research from Stanford's AI Lab shows that content with properly structured multimodal schemas receives 340% more citations from AI engines than text-only content. More importantly, 68% of AI-generated responses now pull from at least two content formats when answering complex queries.

Here's what's driving this shift:

The Core Components of a Multimodal Schema Framework

1. Semantic Alignment Across Formats

Your first priority is ensuring that your text, images, and videos tell the same story using consistent terminology and concepts. AI engines excel at identifying discrepancies between what you say and what you show.

Implementation Strategy:
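One way to spot-check alignment is to compare the key terms used in each format. The sketch below is illustrative only: the `key_terms` and `alignment_report` helpers, the stop-word list, and the idea of using simple word frequency are all assumptions made for this example, not part of any standard tool. It reports which of the article's most frequent terms never appear in the alt text or the video transcript.

```python
import re
from collections import Counter

# Small illustrative stop-word list (an assumption); a real project would use a fuller one.
STOP_WORDS = {"the", "a", "an", "and", "of", "to", "in", "for", "on", "with", "is", "our", "how"}

def key_terms(text: str, top_n: int = 5) -> set[str]:
    """Return the top_n most frequent terms in text, ignoring stop words and very short words."""
    words = re.findall(r"[a-z]+", text.lower())
    counts = Counter(w for w in words if w not in STOP_WORDS and len(w) > 2)
    return {term for term, _ in counts.most_common(top_n)}

def alignment_report(body: str, other_formats: dict[str, str], top_n: int = 5) -> dict[str, set[str]]:
    """For each format, list the body's key terms that never appear in that format's text."""
    terms = key_terms(body, top_n)
    report = {}
    for name, text in other_formats.items():
        present = set(re.findall(r"[a-z]+", text.lower()))
        report[name] = terms - present
    return report
```

An empty set for a format means every key term from the article also shows up there. A non-empty set is a prompt to review the wording, not proof of a problem.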

2. Structured Data Orchestration

Modern schema markup goes beyond basic meta descriptions. You need to create interconnected structured data that helps AI engines understand relationships between your different content formats.

Key Schema Types to Implement:
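As an illustration, schema.org's `Article`, `ImageObject`, and `VideoObject` types can be tied together in a single JSON-LD `@graph`, with `@id` references expressing how the pieces relate. The sketch below builds such a graph in Python. The `build_multimodal_graph` helper, the URLs, and the caption text are placeholders assumed for the example, and the right mix of types will depend on your content.

```python
import json

def build_multimodal_graph(page_url: str, headline: str, image_url: str, video_url: str) -> dict:
    """Build a JSON-LD graph that links an article to its supporting image and video."""
    image_id = f"{page_url}#primary-image"      # hypothetical fragment identifiers
    video_id = f"{page_url}#explainer-video"
    return {
        "@context": "https://schema.org",
        "@graph": [
            {
                "@type": "Article",
                "@id": f"{page_url}#article",
                "headline": headline,
                # Referencing assets by @id keeps each one defined exactly once.
                "image": {"@id": image_id},
                "video": {"@id": video_id},
            },
            {
                "@type": "ImageObject",
                "@id": image_id,
                "contentUrl": image_url,
                "caption": "Placeholder caption describing what the image shows",
            },
            {
                "@type": "VideoObject",
                "@id": video_id,
                "name": f"{headline} (video)",
                "contentUrl": video_url,
            },
        ],
    }

graph = build_multimodal_graph(
    "https://example.com/guide",
    "Example guide",
    "https://example.com/media/chart.png",
    "https://example.com/media/walkthrough.mp4",
)
print(json.dumps(graph, indent=2))
```

The printed JSON can be placed in a `<script type="application/ld+json">` tag on the page.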

3. Cross-Format Content Mapping

Create explicit connections between your text content and visual assets. This helps AI engines understand which images or videos support specific textual claims or concepts.

Mapping Techniques:
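One possible technique is to keep an explicit map from each section of the text to the assets that support it, then flag anything left unlinked. The structure below is a hypothetical sketch: the `AssetLink` class, the `unlinked` helper, and the idea of keying on section anchors are assumptions made for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AssetLink:
    section_anchor: str  # e.g. the id of a heading in the article
    asset_url: str       # image or video that supports that section
    role: str            # free-text label such as "illustrates" or "demonstrates"

def unlinked(sections: list[str], assets: list[str], links: list[AssetLink]) -> tuple[list[str], list[str]]:
    """Return (sections with no supporting asset, assets not tied to any section)."""
    linked_sections = {link.section_anchor for link in links}
    linked_assets = {link.asset_url for link in links}
    orphan_sections = [s for s in sections if s not in linked_sections]
    orphan_assets = [a for a in assets if a not in linked_assets]
    return orphan_sections, orphan_assets
```

Running this before publishing gives a quick list of claims with no visual support and visuals with no textual home.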

Building Your Multimodal Optimization Workflow

Step 1: Content Audit and Asset Inventory

Start by cataloging all your existing content across formats. Identify:
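A first pass at the catalog can be automated. The sketch below groups file paths by format based on their extension. The `FORMATS` mapping and the `inventory` helper are assumptions for this example, so adjust the extensions to match your own library.

```python
from collections import defaultdict
from pathlib import PurePosixPath

# Assumed extension-to-format mapping; extend it to match your own content library.
FORMATS = {
    ".md": "text", ".html": "text",
    ".png": "image", ".jpg": "image", ".webp": "image",
    ".mp4": "video", ".webm": "video",
}

def inventory(paths: list[str]) -> dict[str, list[str]]:
    """Group file paths by content format, using the (case-insensitive) file extension."""
    groups: dict[str, list[str]] = defaultdict(list)
    for path in paths:
        suffix = PurePosixPath(path).suffix.lower()
        groups[FORMATS.get(suffix, "other")].append(path)
    return dict(groups)
```

Anything that lands in the "other" group is worth a manual look, since it may be an asset type the mapping does not yet cover.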

Step 2: Keyword Research for Visual Content

Expand your keyword strategy beyond text-only terms. Research:

Step 3: Create Content Templates

Develop standardized templates that ensure consistency across formats:

Text Content Template:

Visual Content Template:

Schema Template:

Step 4: AI-First Content Creation

When creating new content, think like an AI engine from the start:

Advanced Optimization Techniques

Contextual Image Descriptions

Move beyond basic alt text to create descriptions that explain why the image matters in your content's context. Instead of "graph showing sales increase," use "quarterly revenue growth chart demonstrating 45% increase following implementation of customer retention strategies discussed above."
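A rough way to find descriptions that need this treatment is a simple heuristic check. The sketch below flags alt text that is either very short or never mentions any of the page's topics. The `is_contextual` function, the eight-word threshold, and the topic list are assumptions chosen for illustration, not an established standard.

```python
import re

def is_contextual(alt_text: str, page_topics: set[str], min_words: int = 8) -> bool:
    """Heuristic: the description is reasonably long and mentions at least one page topic."""
    lowered = alt_text.lower()
    words = re.findall(r"[a-z0-9%]+", lowered)
    mentions_topic = any(topic.lower() in lowered for topic in page_topics)
    return len(words) >= min_words and mentions_topic

topics = {"customer retention", "revenue"}
print(is_contextual("graph showing sales increase", topics))
print(is_contextual(
    "quarterly revenue growth chart demonstrating 45% increase following "
    "implementation of customer retention strategies discussed above",
    topics,
))
```

With these inputs the first description is rejected and the second passes, which matches the two examples in the paragraph above.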

Video Transcript Integration

Don't just upload transcripts. Weave key video insights into your main text content. This creates multiple pathways for AI engines to understand and cite your material.
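On the markup side, schema.org's `VideoObject` has a `transcript` property, and individual segments can be described as `Clip` parts with `startOffset` and `endOffset`. The sketch below assembles that structure. The `video_with_key_moments` helper and the `?t=` deep-link format are assumptions, so check how your own video player addresses timestamps.

```python
def video_with_key_moments(
    name: str,
    content_url: str,
    transcript: str,
    moments: list[tuple[str, int, int]],
) -> dict:
    """Build VideoObject JSON-LD with a transcript and one Clip per (label, start, end) moment."""
    return {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "contentUrl": content_url,
        "transcript": transcript,
        "hasPart": [
            {
                "@type": "Clip",
                "name": label,
                "startOffset": start,  # seconds from the start of the video
                "endOffset": end,
                # Assumed deep-link format; many players use a different parameter.
                "url": f"{content_url}?t={start}",
            }
            for label, start, end in moments
        ],
    }
```

Using the same clip labels as the matching section headings in your article keeps the video and the text pointing at the same concepts.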

Interactive Schema Markup

Implement schemas that help AI engines understand how users should interact with your multimodal content:
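As one example, schema.org lets you attach a `potentialAction` (such as a `WatchAction`) and an `interactionStatistic` to a media object. The sketch below adds both to an existing `VideoObject` dictionary. The `add_interaction_hints` helper and the sample values are assumptions, and how any particular AI engine uses these properties is not something this example can confirm.

```python
def add_interaction_hints(video: dict, watch_url: str, view_count: int) -> dict:
    """Return a copy of a VideoObject dict with action and usage metadata added."""
    enriched = dict(video)  # shallow copy so the caller's dict is left untouched
    enriched["potentialAction"] = {
        "@type": "WatchAction",
        "target": watch_url,
    }
    enriched["interactionStatistic"] = {
        "@type": "InteractionCounter",
        "interactionType": {"@type": "WatchAction"},
        "userInteractionCount": view_count,
    }
    return enriched
```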

Measuring Multimodal Success

Track these key metrics to gauge your framework's effectiveness:

Citation Performance

Engagement Indicators

Technical Performance

How Citescope Ai Helps

Building a multimodal schema framework requires sophisticated analysis of how AI engines interpret and cite different content formats. Citescope Ai's GEO Score evaluates your content across five critical dimensions, including how well your text, images, and videos work together to create AI-friendly content structures.

The platform's Citation Tracker shows exactly when and how AI engines like ChatGPT and Perplexity reference your multimodal content, helping you identify which format combinations drive the most citations. Plus, the AI Rewriter can optimize your content structure to better integrate visual elements and improve cross-format coherence.

Common Pitfalls to Avoid

Over-Optimization

Don't sacrifice user experience for AI optimization. Your multimodal framework should enhance, not complicate, human consumption of your content.

Format Favoritism

Avoid treating one format as primary and others as secondary. AI engines reward content where all formats contribute meaningfully to the overall message.

Inconsistent Updates

When you update content in one format, ensure related formats remain aligned. Outdated images or videos can hurt your overall optimization efforts.
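A simple date comparison can catch this kind of drift. The sketch below lists assets whose last update trails the text's last update by more than a chosen number of days. The `stale_assets` helper, the 30-day default, and the sample file names are assumptions for illustration.

```python
from datetime import date, timedelta

def stale_assets(text_updated: date, asset_updated: dict[str, date], max_lag_days: int = 30) -> list[str]:
    """List assets last modified more than max_lag_days before the text was last updated."""
    cutoff = text_updated - timedelta(days=max_lag_days)
    return sorted(name for name, updated in asset_updated.items() if updated < cutoff)

flagged = stale_assets(
    date(2026, 3, 1),
    {"chart.png": date(2025, 11, 1), "demo.mp4": date(2026, 2, 20)},
)
print(flagged)  # ['chart.png']
```

An asset on this list is not necessarily wrong. It is a candidate for a quick review against the updated text.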

The Future of Multimodal Content

Looking ahead, AI search engines will become even more sophisticated in understanding complex relationships between different content formats. Early adoption of comprehensive multimodal schema frameworks positions you to capitalize on these advances.

By 2027, industry experts predict that 89% of high-performing content will require multimodal optimization to maintain AI visibility. The frameworks you build today will determine your competitive position in tomorrow's AI-first content landscape.

Ready to Optimize for AI Search?

Building a multimodal schema optimization framework doesn't have to be overwhelming. Citescope Ai provides the tools and insights you need to create content that resonates across all AI search engines. Start with our free tier to analyze your existing content's multimodal potential, then scale up as you see results. Try Citescope Ai free today and discover how proper multimodal optimization can transform your AI visibility.