How to Build an LLMs.txt Bot Control Strategy When Robots.txt No Longer Governs AI Crawler Access

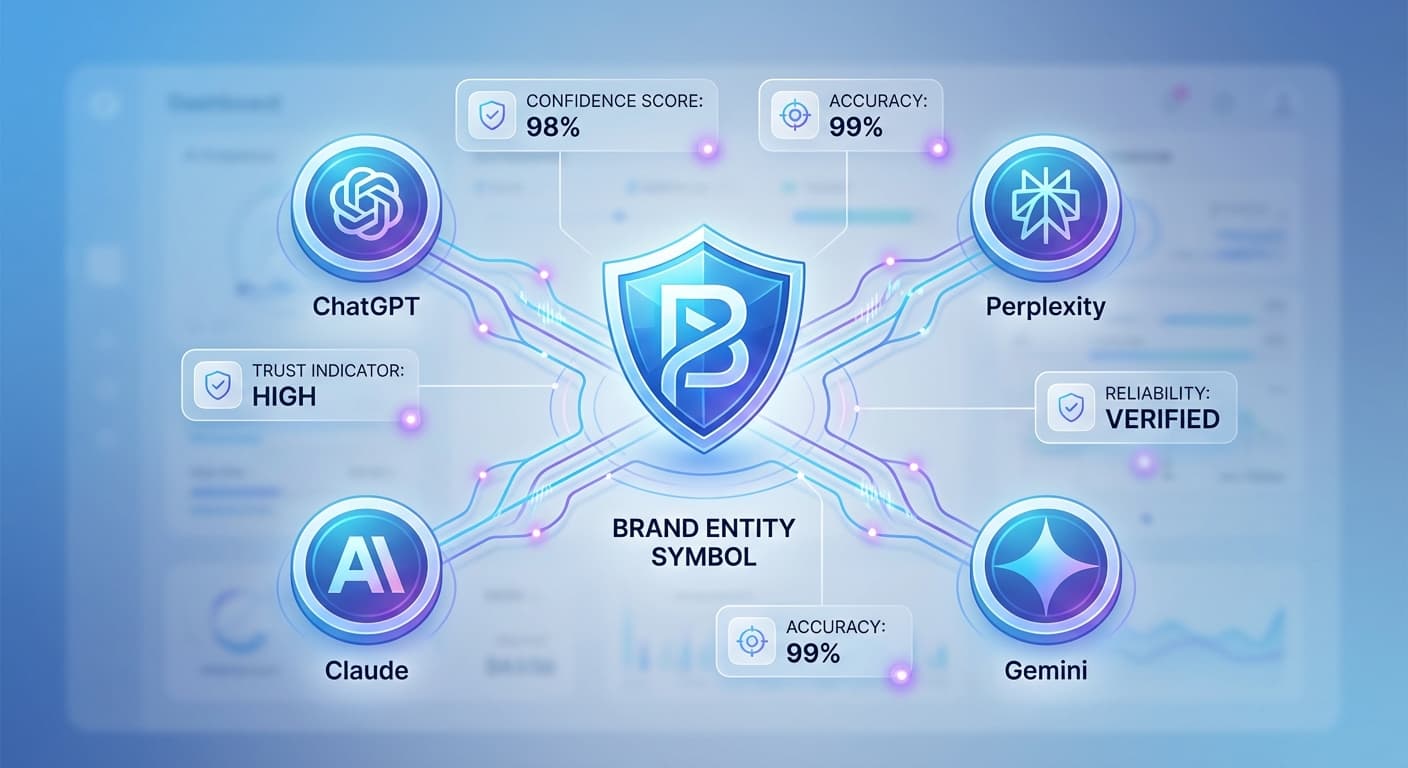

The digital landscape has fundamentally shifted. In 2026, with AI search queries now representing over 35% of all online searches and more than 600 million weekly active users across ChatGPT, Perplexity, Claude, and Gemini, the traditional robots.txt file has become obsolete for controlling AI crawler access. The emergence of LLMs.txt as the new standard for AI bot management is not just a trend; it has become a necessity for any content creator serious about maintaining control over their digital assets.

The Death of Robots.txt in the AI Era

Robots.txt served us well for over two decades, but it was designed for a different internet. Traditional search engine crawlers followed predictable patterns and respected standardized directives. AI crawlers, however, operate under entirely different principles:

By early 2025, major AI platforms had begun implementing their own crawler protocols, leaving robots.txt directives largely ignored or misinterpreted. This shift created a critical gap in content control that LLMs.txt is designed to fill.

Understanding LLMs.txt: The New Standard

LLMs.txt represents a paradigm shift from simple "allow/disallow" directives to comprehensive policy-based bot management. Unlike robots.txt, which uses basic pattern matching, LLMs.txt employs structured JSON formatting that AI systems can interpret with semantic understanding.

Core Components of LLMs.txt

1. AI Agent Identification

    {
      "agents": {
        "ChatGPT": {
          "access_level": "selective",
          "allowed_content_types": ["articles", "guides"],
          "attribution_required": true
        }
      }
    }

2. Content Classification

    {
      "content_policies": {
        "premium_content": {
          "access": "restricted",
          "licensing_required": true
        },
        "educational_content": {
          "access": "open",
          "attribution_format": "detailed"
        }
      }
    }

3. Usage Context Controls

    {
      "usage_restrictions": {
        "commercial_training": false,
        "research_purposes": true,
        "content_generation": "attribution_required"
      }
    }
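To make the three components concrete, here is a minimal Python sketch that loads them as one policy and answers a simple access question. It rests on several assumptions: that the three fragments above are merged into a single JSON object, that an unknown agent is denied by default, and that `"open"` is a valid `access_level` value alongside `"selective"`. The helper names `load_policy` and `agent_may_access` are hypothetical, and the field names come from the fragments above rather than from a verified specification.

```python
import json

# Assumption: the three fragments above merged into one JSON object.
POLICY_JSON = """
{
  "agents": {
    "ChatGPT": {
      "access_level": "selective",
      "allowed_content_types": ["articles", "guides"],
      "attribution_required": true
    }
  },
  "content_policies": {
    "premium_content": {"access": "restricted", "licensing_required": true},
    "educational_content": {"access": "open", "attribution_format": "detailed"}
  },
  "usage_restrictions": {
    "commercial_training": false,
    "research_purposes": true,
    "content_generation": "attribution_required"
  }
}
"""

REQUIRED_SECTIONS = {"agents", "content_policies", "usage_restrictions"}


def load_policy(text: str) -> dict:
    """Parse the policy and fail early if a top-level section is missing."""
    policy = json.loads(text)
    missing = REQUIRED_SECTIONS - policy.keys()
    if missing:
        raise ValueError(f"policy is missing sections: {sorted(missing)}")
    return policy


def agent_may_access(policy: dict, agent: str, content_type: str) -> bool:
    """Return True if the named agent may use the given content type.

    Unknown agents and unknown access levels are denied (assumed default).
    """
    rules = policy["agents"].get(agent)
    if rules is None:
        return False
    level = rules.get("access_level")
    if level == "open":  # assumed value, not shown in the fragments above
        return True
    if level == "selective":
        return content_type in rules.get("allowed_content_types", [])
    return False


if __name__ == "__main__":
    policy = load_policy(POLICY_JSON)
    print(agent_may_access(policy, "ChatGPT", "guides"))
    print(agent_may_access(policy, "UnknownBot", "guides"))
```

Denying unknown agents by default is a design choice for this sketch; a site that values discoverability over protection might prefer the opposite default.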

Building Your LLMs.txt Strategy: A Step-by-Step Approach

Step 1: Content Audit and Classification

Before implementing LLMs.txt, conduct a comprehensive audit of your content assets:

Each category requires different access controls and attribution requirements.
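As a starting point for the audit, the following Python sketch groups URLs into policy categories by path prefix. The category names `premium_content` and `educational_content` are taken from the content classification example earlier in this article; the path prefixes, the `unclassified` fallback, and the function names are hypothetical and would need to match your own site structure.

```python
from urllib.parse import urlparse

# Hypothetical mapping from URL path prefix to policy category.
CATEGORY_BY_PREFIX = {
    "/premium/": "premium_content",
    "/guides/": "educational_content",
    "/articles/": "educational_content",
}


def classify(url: str, default: str = "unclassified") -> str:
    """Return the category of the first prefix that matches the URL path."""
    path = urlparse(url).path
    for prefix, category in CATEGORY_BY_PREFIX.items():
        if path.startswith(prefix):
            return category
    return default


def audit(urls):
    """Group URLs by category so gaps in the classification are visible."""
    report = {}
    for url in urls:
        report.setdefault(classify(url), []).append(url)
    return report


if __name__ == "__main__":
    sample = [
        "https://example.com/premium/annual-report",
        "https://example.com/guides/getting-started",
        "https://example.com/about",
    ]
    for category, members in audit(sample).items():
        print(category, len(members))
```

Anything that lands in `unclassified` is a page with no policy yet, which is usually the most useful output of a first audit pass.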

Step 2: Define AI Agent Policies

Not all AI crawlers are created equal. Establish specific policies for different platforms:

Research-Focused Platforms (Claude, Perplexity):

Consumer-Focused Platforms (ChatGPT, Gemini):

Step 3: Implement Granular Access Controls

Modern LLMs.txt allows for sophisticated access control based on:

Step 4: Attribution and Licensing Framework

Establish clear attribution requirements:

    {
      "attribution_requirements": {
        "minimum_citation": {
          "source_url": true,
          "author_name": true,
          "publication_date": true
        },
        "preferred_citation": {
          "full_title": true,
          "publication_name": true,
          "access_date": true
        }
      }
    }
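One way to put these requirements to work is to check an observed citation against them. The sketch below assumes a citation has already been captured as a dictionary with the same field names as the policy; how that record is obtained is outside its scope, and the function name `missing_fields` is hypothetical.

```python
# The attribution requirements above, expressed as a Python dict.
ATTRIBUTION_REQUIREMENTS = {
    "minimum_citation": {
        "source_url": True,
        "author_name": True,
        "publication_date": True,
    },
    "preferred_citation": {
        "full_title": True,
        "publication_name": True,
        "access_date": True,
    },
}


def missing_fields(citation: dict, level: str = "minimum_citation") -> list:
    """List required fields that are absent or empty in a citation record."""
    required = [
        field
        for field, needed in ATTRIBUTION_REQUIREMENTS[level].items()
        if needed
    ]
    return [field for field in required if not citation.get(field)]


if __name__ == "__main__":
    observed = {
        "source_url": "https://example.com/guides/getting-started",
        "author_name": "A. Writer",
    }
    print(missing_fields(observed))  # prints ['publication_date']
```

An empty list means the citation satisfies the chosen level; running the same record against `preferred_citation` shows how far it is from the fuller format.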

Advanced LLMs.txt Implementation Strategies

Dynamic Policy Updates

Unlike static robots.txt files, LLMs.txt can be updated dynamically based on:

Monitoring and Enforcement

Implement tracking mechanisms to monitor compliance:

This is where tools like Citescope Ai become invaluable, providing comprehensive citation tracking across all major AI platforms to ensure your LLMs.txt policies are being respected.
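Independent of any particular tool, a rough first compliance check can be run against your own server access logs. The Python sketch below counts requests from AI crawlers to restricted paths. It assumes logs in the common "combined" format, treats `GPTBot`, `ClaudeBot`, and `PerplexityBot` as user-agent substrings (these tokens can change, so verify them against each vendor's current documentation), and uses a hypothetical `/premium/` prefix for restricted content.

```python
import re
from collections import Counter

# Assumption: access logs use the "combined" log format.
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)

# User-agent substrings to look for; verify against vendor documentation.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Hypothetical prefixes for content the policy marks as restricted.
RESTRICTED_PREFIXES = ("/premium/",)


def restricted_hits(lines):
    """Count requests per AI crawler token that touched a restricted path."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match is None:
            continue  # skip lines that do not fit the assumed format
        agent = match["agent"]
        token = next((t for t in AI_CRAWLER_TOKENS if t in agent), None)
        if token and match["path"].startswith(RESTRICTED_PREFIXES):
            hits[token] += 1
    return hits


if __name__ == "__main__":
    with open("access.log", encoding="utf-8") as handle:  # hypothetical path
        for token, count in restricted_hits(handle).most_common():
            print(f"{token}: {count} requests to restricted paths")
```

User-agent strings can be spoofed or omitted, so a clean report here is weak evidence of compliance, while a non-empty one is a concrete lead worth following up.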

Integration with Content Management Systems

Modern CMS platforms are beginning to integrate LLMs.txt generation:

Common Implementation Mistakes to Avoid

Over-Restriction

While controlling access is important, being overly restrictive can hurt your content's discoverability in AI search results. Balance protection with visibility.

Inconsistent Policies

Ensure your LLMs.txt policies align with your broader content strategy and don't contradict other access controls.
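One contradiction that is easy to check mechanically is a path that your LLMs.txt policy opens to a crawler while your robots.txt still disallows it. The following Python sketch uses the standard library's `urllib.robotparser` for the robots.txt side. The `LLMS_ALLOWED_PATHS` mapping is a hypothetical, hand-built summary of your LLMs.txt policy keyed by crawler token, and the sample robots.txt content is illustrative only.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt content; in practice, read your real file.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /guides/
"""

# Hypothetical summary of paths the LLMs.txt policy opens to each crawler.
LLMS_ALLOWED_PATHS = {
    "GPTBot": ["/guides/getting-started", "/articles/launch"],
}


def find_conflicts(robots_txt: str, allowed_paths: dict) -> list:
    """Return (agent, path) pairs allowed by policy but blocked by robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    conflicts = []
    for agent, paths in allowed_paths.items():
        for path in paths:
            if not parser.can_fetch(agent, path):
                conflicts.append((agent, path))
    return conflicts


if __name__ == "__main__":
    for agent, path in find_conflicts(ROBOTS_TXT, LLMS_ALLOWED_PATHS):
        print(f"{agent}: {path} is allowed by policy but blocked in robots.txt")
```

The check only covers one direction of disagreement; the reverse case, where robots.txt allows what the policy restricts, would need the restricted paths as input as well.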

Neglecting Updates

LLMs.txt isn't a "set it and forget it" solution. Regular updates are essential as AI platforms evolve and your content strategy changes.

Ignoring Attribution Tracking

Without proper monitoring, you can't verify if your policies are being respected or if you're receiving appropriate credit for your content.

How Citescope Ai Helps

While implementing LLMs.txt gives you control over AI crawler access, monitoring compliance and tracking citations requires specialized tools. Citescope Ai's Citation Tracker monitors when your content gets cited across ChatGPT, Perplexity, Claude, and Gemini, helping you:

The platform's GEO Score also helps you understand how well your content is structured for AI visibility, ensuring that your accessible content performs well in AI search results while your restricted content remains protected.

The Future of AI Bot Management

As we move deeper into 2026, expect to see:

Best Practices for Ongoing Management

Regular Policy Reviews

Schedule quarterly reviews of your LLMs.txt policies to ensure they align with:

Community Engagement

Participate in industry discussions about AI bot management standards. The LLMs.txt specification is still evolving, and content creator input is crucial for shaping its future.

Performance Optimization

Use analytics to optimize your strategy:

Ready to Optimize for AI Search?

The transition from robots.txt to LLMs.txt represents a fundamental shift in how we control AI access to our content. While implementing proper bot management policies is crucial, success in the AI search era requires more than access control: you also need comprehensive optimization and tracking.

Citescope Ai provides the complete toolkit for thriving in this new landscape, from content optimization with our AI Rewriter to comprehensive citation tracking across all major AI platforms. Start your free trial today and take control of your content's journey through the AI ecosystem.