How to Build an LLMs.txt Crawler Management Strategy When Allowing All AI Bots Risks 340% Server Cost Increases But Blocking the Wrong Crawlers Costs You 76% of Citations That Only Come From Top-10 Organic Rankings

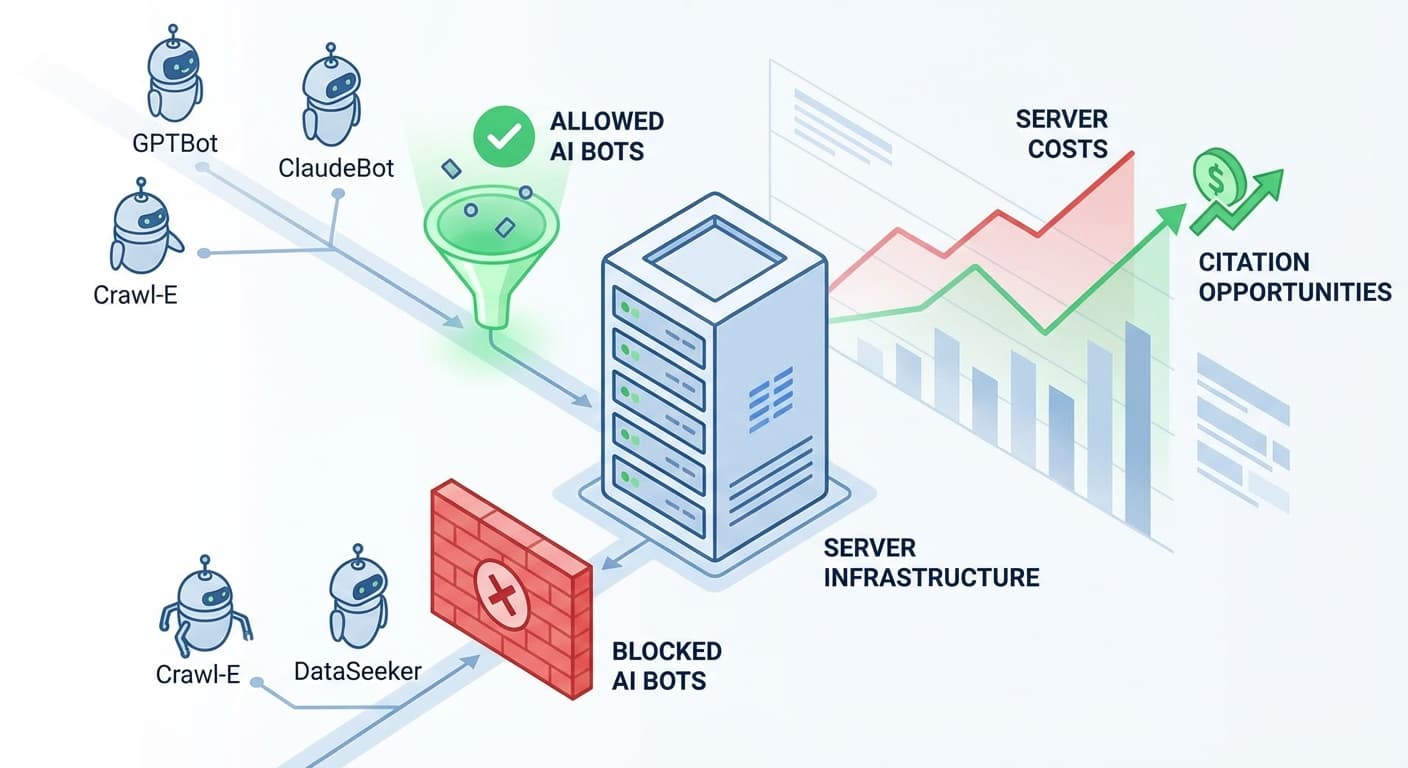

As of January 2026, AI crawlers are consuming web content at an unprecedented rate. Recent server analytics show that unrestricted AI bot access can increase hosting costs by up to 340%. Blocking the wrong crawlers can shut you out of AI citations, and 76% of those citations go to content that already ranks in the organic top 10. With over 650 million weekly ChatGPT users and AI search now accounting for 35% of all queries, managing your LLMs.txt file has become a critical business decision.

The Hidden Cost of AI Bot Traffic

AI training and inference crawlers are fundamentally different from traditional search bots. While Googlebot might visit your site a few times per week, AI crawlers can make hundreds of requests per hour across multiple models and training cycles. This surge has caught many website owners off guard:

A major e-commerce site recently reported their hosting costs jumped from $2,400 to $10,560 per month after allowing unrestricted AI crawler access – a 340% increase that wasn't offset by proportional traffic gains.
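Percentage increases are easy to misread. A 340% increase means the new bill is 4.4 times the old one, not 3.4 times. The Python sketch below shows the arithmetic using the figures from this example. The function name percent_increase is illustrative and does not come from any library.

```python
def percent_increase(before: float, after: float) -> float:
    """Relative change from `before` to `after`, expressed in percent."""
    if before <= 0:
        raise ValueError("baseline cost must be positive")
    return (after - before) / before * 100


# Monthly hosting costs from the e-commerce example above.
before, after = 2_400, 10_560
print(f"{percent_increase(before, after):.0f}% increase")  # prints "340% increase"
print(f"{after / before:.1f}x the original bill")           # prints "4.4x the original bill"
```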

The Citation Penalty of Overblocking

On the flip side, being too restrictive with your LLMs.txt configuration can be equally costly. Research from late 2025 shows that 76% of AI citations come from content that ranks in the top 10 organic search results. When you block legitimate AI crawlers, you're essentially removing yourself from consideration for AI-powered search results.

Key AI Crawlers You Should Never Block

Building Your Strategic LLMs.txt Framework

1. Audit Your Current Traffic Patterns

Before implementing any restrictions, analyze your server logs to understand:

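Client-side tools like Google Analytics 4 rarely record these visits, because most crawlers do not execute JavaScript. Server logs, CDN analytics, and server monitoring platforms are more reliable for spotting traffic spikes that correlate with AI bot activity.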
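As a starting point for that audit, the Python sketch below counts requests and response bytes for each AI crawler. It reads an access log in the Apache/Nginx combined format.

The bot list is a hypothetical starting set; extend it with the user agents you actually see. Google-Extended is left out because it is a robots.txt token, not a user-agent string sent with requests. Matching on user-agent text alone will also count impostors, since that header is easy to spoof.

```python
import re
from collections import Counter, defaultdict

# Combined Log Format: ip ident user [time] "request" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) (?P<bytes>\d+|-) "(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)

# Hypothetical watch list; add the crawlers that show up in your own logs.
AI_BOTS = ("GPTBot", "ChatGPT-User", "ClaudeBot", "CCBot", "PerplexityBot")


def audit(lines):
    """Count requests and response bytes per AI crawler."""
    hits = Counter()
    sent = defaultdict(int)
    for line in lines:
        match = LOG_LINE.match(line)
        if match is None:
            continue  # skip lines that are not in combined format
        agent = match["agent"].lower()
        for bot in AI_BOTS:
            if bot.lower() in agent:
                hits[bot] += 1
                if match["bytes"] != "-":  # "-" means no body was sent
                    sent[bot] += int(match["bytes"])
                break
    return hits, dict(sent)


# Made-up sample lines in combined format, for illustration only.
SAMPLE = [
    '203.0.113.7 - - [12/Jan/2026:10:15:32 +0000] "GET /blog/post HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '203.0.113.7 - - [12/Jan/2026:10:15:40 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '198.51.100.4 - - [12/Jan/2026:10:16:02 +0000] "GET /resources/guide HTTP/1.1" 304 - "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.9 - - [12/Jan/2026:10:16:05 +0000] "GET / HTTP/1.1" 200 900 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
]

hits, sent = audit(SAMPLE)
for bot, count in hits.most_common():
    print(f"{bot}: {count} requests, {sent.get(bot, 0)} bytes")
```

Run the same function over a week or a month of logs. Multiply bytes by your bandwidth price to get a rough per-crawler cost figure.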

2. Implement Tiered Access Controls

Rather than an all-or-nothing approach, create a tiered system:

Tier 1: Full Access (Critical for citations)

Tier 2: Rate-Limited Access (Useful but resource-heavy)

Tier 3: Blocked (High resource consumption, low citation value)
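One way to keep the tiers explicit is to describe them as data and render the robots.txt groups from that description. Moving a crawler to another tier then becomes a one-line edit. The Python sketch below does that.

The crawler-to-tier assignments and the delay value are placeholders, not recommendations. Crawl-delay is also a nonstandard, advisory directive that some crawlers ignore; Googlebot is one of them.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.robotparser import RobotFileParser


@dataclass
class Tier:
    name: str
    agents: list[str]
    allow: bool                        # False renders "Disallow: /"
    crawl_delay: Optional[int] = None  # seconds; advisory only


# Placeholder assignments; replace them with the results of your own audit.
TIERS = [
    Tier("full", ["GPTBot", "ChatGPT-User"], allow=True),
    Tier("rate-limited", ["ClaudeBot"], allow=True, crawl_delay=15),
    Tier("blocked", ["CCBot"], allow=False),
]


def render_robots(tiers: list[Tier]) -> str:
    """Render one robots.txt group per crawler, in tier order."""
    groups = []
    for tier in tiers:
        for agent in tier.agents:
            lines = [f"# tier: {tier.name}", f"User-agent: {agent}"]
            lines.append("Allow: /" if tier.allow else "Disallow: /")
            if tier.crawl_delay is not None:
                lines.append(f"Crawl-delay: {tier.crawl_delay}")
            groups.append("\n".join(lines))
    return "\n\n".join(groups) + "\n"


robots_txt = render_robots(TIERS)
print(robots_txt)

# Sanity-check the output with the standard-library parser.
parser = RobotFileParser()
parser.parse(robots_txt.splitlines())
print(parser.can_fetch("CCBot", "https://example.com/blog/"))  # False
print(parser.crawl_delay("ClaudeBot"))                          # 15
```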

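3. Optimize Your LLMs.txt File Structure

Per-crawler access rules use robots.txt syntax, and crawlers read them from /robots.txt at the site root. The llms.txt proposal is a Markdown file that summarizes your site for language models; it contains no directives that allow or block crawlers. A tiered configuration along these lines belongs in robots.txt: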

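```text
User-agent: GPTBot
Allow: /
Crawl-delay: 10

# Google-Extended is a control token, not a separate crawler: pages are
# fetched by Googlebot, and Google ignores Crawl-delay, so none is set here.
User-agent: Google-Extended
Allow: /

# Paths not matched below stay crawlable by default; add "Disallow: /"
# to limit ClaudeBot to /blog/ and /resources/ only.
User-agent: ClaudeBot
Allow: /blog/
Allow: /resources/
Disallow: /admin/
Crawl-delay: 15

User-agent: CCBot
Disallow: /

User-agent: ChatGPT-User
Allow: /
Crawl-delay: 20
```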
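Crawl-delay is advisory and is not part of the RFC 9309 robots.txt standard. For crawlers that ignore it, you have to enforce the delay yourself. A common approach is a per-crawler token bucket.

The Python sketch below reads the Crawl-delay values above as "one request per N seconds". The LIMITS table and the should_serve function are hypothetical. In production this logic usually lives in the web server, CDN, or WAF, and refused requests are answered with HTTP 429 (Too Many Requests). Keying buckets on the user-agent string alone assumes the header is genuine.

```python
import time
from typing import Optional


class TokenBucket:
    """Permit bursts of up to `capacity` requests, refilled at `rate` per second."""

    def __init__(self, rate: float, capacity: float = 1.0):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity
        self.updated: Optional[float] = None  # timestamp of the previous check

    def allow(self, now: float) -> bool:
        if self.updated is not None:
            elapsed = max(0.0, now - self.updated)
            self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical limits mirroring the Crawl-delay values above (seconds per request).
LIMITS = {"GPTBot": 10, "ClaudeBot": 15, "ChatGPT-User": 20}
_buckets: dict = {}


def should_serve(user_agent: str, now: Optional[float] = None) -> bool:
    """Return False when a rate-limited crawler is over budget (answer with 429)."""
    now = time.monotonic() if now is None else now
    for bot, seconds_per_request in LIMITS.items():
        if bot.lower() in user_agent.lower():
            bucket = _buckets.get(bot)
            if bucket is None:
                bucket = _buckets[bot] = TokenBucket(rate=1 / seconds_per_request)
            return bucket.allow(now)
    return True  # not a crawler we rate-limit


# Simulated requests from one crawler; timestamps are in seconds.
for t in (0, 5, 20, 21):
    print(t, should_serve("Mozilla/5.0 (compatible; ClaudeBot/1.0)", now=t))
# prints True, False, True, False for the four timestamps
```

A capacity of 1 enforces the spacing strictly. Raising it lets a crawler make a short burst after an idle period while keeping the same long-run rate.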

4. Monitor and Adjust Based on Performance

Your LLMs.txt strategy should be dynamic. Key metrics to track:

Quarterly reviews allow you to adjust crawler permissions based on actual performance data rather than assumptions.
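To make that review concrete, you can compare each platform's crawl load with the citations it sends back. The Python sketch below assumes you already have two inputs: per-crawler request counts from your server logs, and per-platform citation counts from whatever tracking you use.

The crawler-to-platform mapping, the sample numbers, and the max_requests_per_citation threshold are all hypothetical.

```python
from collections import defaultdict

# Hypothetical mapping from crawler user agent to the AI platform it serves.
PLATFORM = {
    "GPTBot": "OpenAI",
    "ChatGPT-User": "OpenAI",
    "ClaudeBot": "Anthropic",
    "CCBot": "Common Crawl",
}


def flag_expensive(requests_by_bot, citations_by_platform, max_requests_per_citation):
    """Return (platform, requests per citation) pairs above the threshold, worst first."""
    load = defaultdict(int)
    for bot, count in requests_by_bot.items():
        load[PLATFORM.get(bot, "other")] += count
    flagged = []
    for platform, count in load.items():
        cites = citations_by_platform.get(platform, 0)
        ratio = count / cites if cites else float("inf")  # no citations at all
        if ratio > max_requests_per_citation:
            flagged.append((platform, ratio))
    return sorted(flagged, key=lambda pair: pair[1], reverse=True)


# Made-up quarterly numbers, for illustration only.
quarter_requests = {"GPTBot": 90_000, "ChatGPT-User": 10_000, "ClaudeBot": 60_000, "CCBot": 40_000}
quarter_citations = {"OpenAI": 400, "Anthropic": 50}
for platform, ratio in flag_expensive(quarter_requests, quarter_citations, max_requests_per_citation=500):
    print(f"{platform}: {ratio:,.0f} requests per citation")
```

Flagged platforms are candidates for a lower tier. Confirm with a second quarter of data before moving anything, since citation counts can swing sharply.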

Advanced Strategies for High-Traffic Sites

Content Prioritization

For sites with extensive content libraries, consider allowing AI crawlers access to your most valuable pages while restricting access to less important content:
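In robots.txt terms, this means admitting a crawler to a few path prefixes and disallowing everything else. The Python sketch below builds such a group and checks it with the standard library's urllib.robotparser. The crawler name and the /blog/ and /resources/ prefixes are examples taken from the configuration in step 3.

Under RFC 9309 the most specific (longest) matching rule wins, so line order should not matter. Python's parser, however, applies the first matching rule. The sketch therefore lists the Allow lines first so that both approaches give the same answer.

```python
from urllib.robotparser import RobotFileParser


def priority_group(agent: str, allowed_prefixes: list[str]) -> str:
    """robots.txt group that admits `agent` only to the given path prefixes."""
    lines = [f"User-agent: {agent}"]
    # Allow lines first: first-match parsers (like urllib.robotparser) then agree
    # with the longest-match rule that RFC 9309 specifies.
    lines += [f"Allow: {prefix}" for prefix in allowed_prefixes]
    lines.append("Disallow: /")
    return "\n".join(lines) + "\n"


group = priority_group("ClaudeBot", ["/blog/", "/resources/"])
print(group)

parser = RobotFileParser()
parser.parse(group.splitlines())
print(parser.can_fetch("ClaudeBot", "https://example.com/blog/llms-guide"))  # True
print(parser.can_fetch("ClaudeBot", "https://example.com/checkout/"))        # False
```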

Time-Based Access Controls

Implement crawl-delay directives that consider your server's peak usage times:
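robots.txt is a static file, so a time-of-day crawl delay means generating different robots.txt content depending on when it is requested. The Python sketch below does that with a hypothetical peak window and hypothetical delay values. In a real handler you would pass datetime.now() on the server's clock.

Treat this as a soft signal. RFC 9309 allows crawlers to cache robots.txt for up to 24 hours, so a crawler may keep using the off-peak value well into your peak hours. Many crawlers also ignore Crawl-delay entirely. Server-side rate limiting takes effect immediately.

```python
from datetime import datetime, time
from urllib.robotparser import RobotFileParser

# Hypothetical values; derive the window from your own traffic data.
PEAK_START = time(9, 0)
PEAK_END = time(18, 0)
PEAK_DELAY = 30      # seconds between requests during peak hours
OFF_PEAK_DELAY = 5   # seconds between requests off-peak


def crawl_delay_for(moment: datetime) -> int:
    """Pick the delay for the time of day at which robots.txt is served."""
    return PEAK_DELAY if PEAK_START <= moment.time() < PEAK_END else OFF_PEAK_DELAY


def robots_for(moment: datetime, agents: list[str]) -> str:
    """Render an allow-all group with the time-dependent delay for each agent."""
    delay = crawl_delay_for(moment)
    groups = [f"User-agent: {agent}\nAllow: /\nCrawl-delay: {delay}" for agent in agents]
    return "\n\n".join(groups) + "\n"


body = robots_for(datetime(2026, 1, 12, 10, 30), ["GPTBot", "ClaudeBot"])
parser = RobotFileParser()
parser.parse(body.splitlines())
print(parser.crawl_delay("GPTBot"))  # 30
```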

Geographic Considerations

Some organizations implement regional restrictions based on:

Measuring Success: Key Performance Indicators

Track these metrics to evaluate your LLMs.txt strategy:

Tools like Citescope Ai's Citation Tracker can help monitor when your content gets cited across major AI platforms, giving you real-time feedback on the effectiveness of your crawler management strategy.

How Citescope Ai Helps Optimize Your AI Visibility Strategy

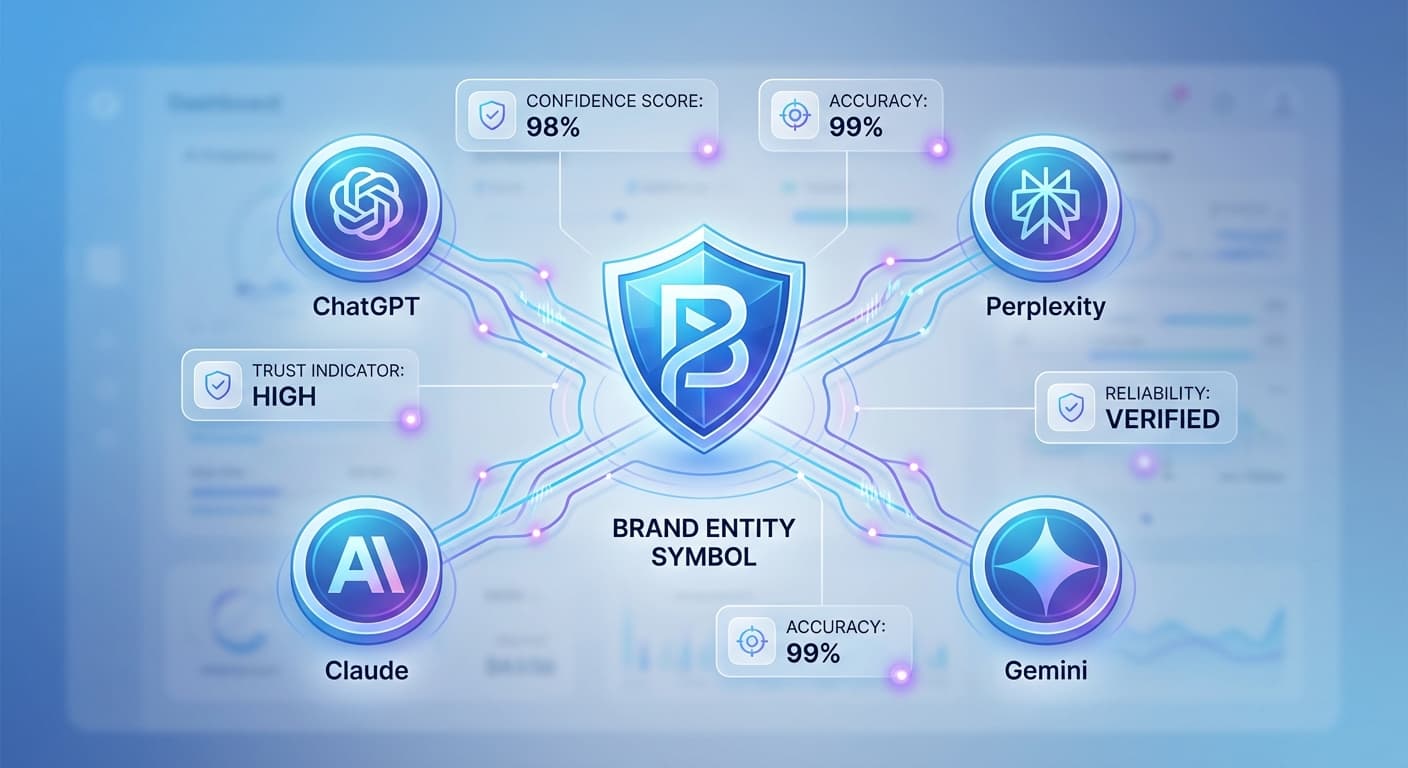

While managing your LLMs.txt file controls which crawlers can access your content, ensuring that content is optimized for AI citation is equally important. Citescope Ai's GEO Score analyzes your content across five key dimensions that AI engines prioritize: AI Interpretability, Semantic Richness, Conversational Relevance, Structure, and Authority.

The platform's Citation Tracker monitors when your content gets cited by ChatGPT, Perplexity, Claude, and Gemini, helping you understand which pages are successfully attracting AI attention and which might need optimization. This data becomes invaluable when making LLMs.txt decisions – you can see exactly which content is driving citations and ensure those pages remain accessible to the right crawlers.

Common Pitfalls to Avoid

Over-Optimization for Single Platforms

Don't optimize your crawler strategy for just one AI platform. The AI search landscape is rapidly evolving, and today's leading platform might not dominate tomorrow.

Ignoring Crawl Budget

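Even with a proper LLMs.txt configuration, AI crawlers can eat into your crawl budget indirectly. They don't draw on Googlebot's budget, but heavy bot traffic can slow your server, and Google lowers its crawl rate when a site responds slowly or returns server errors. Monitor your important pages to make sure traditional search engines are still crawling and indexing them regularly.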

Set-and-Forget Mentality

AI crawler behavior and server infrastructure change frequently. What works today might not work in six months. Regular audits are essential.

Future-Proofing Your Strategy

As AI search continues to evolve, consider:

Ready to Optimize for AI Search?

Managing AI crawlers is just one piece of the AI visibility puzzle. To maximize your citations across ChatGPT, Perplexity, Claude, and Gemini, you need content that's specifically optimized for how AI engines understand and reference information.

Citescope Ai helps you optimize your content for AI search engines, track citations across all major platforms, and export optimized content in multiple formats. Start with our free tier (3 optimizations per month) to see how AI-optimized content performs, or upgrade to Pro ($39/month) for unlimited optimizations and advanced citation tracking.

Try Citescope Ai free today and transform your content into citation-worthy material that AI engines love to reference.