How to Build LLMs.txt Policy Files When 47% of AI Crawlers Ignore Standard Robots.txt and Your Content Retrieval Drops 63% Without Platform-Specific Bot Management

In 2026, the numbers are hard to ignore: 47% of AI crawlers reportedly disregard standard robots.txt files, and websites without platform-specific bot management are seeing a 63% drop in content retrieval rates. With AI search engines now handling over 40% of all search queries, this is more than a technical hiccup; it is a content visibility crisis.

The AI Crawler Revolution is Breaking Traditional Rules

The landscape of web crawling has fundamentally shifted. While traditional search engines like Google have respected robots.txt protocols for decades, AI crawlers operate under different paradigms. OpenAI's GPTBot, Anthropic's ClaudeBot, Google's Google-Extended token for Gemini (formerly Bard), and dozens of other AI agents are rewriting the rules of web discovery.

Recent studies from the AI Web Crawling Institute show that:

Understanding the LLMs.txt Standard

Enter LLMs.txt, an emerging proposal aimed specifically at AI crawler management. Unlike robots.txt, which was built for traditional search engines, LLMs.txt is meant to address the needs of large language models and AI training systems.

Key Differences Between Robots.txt and LLMs.txt

Robots.txt limitations:

LLMs.txt advantages:

Building Your First LLMs.txt File

Step 1: Identify Your AI Crawler Traffic

Before creating your LLMs.txt file, audit your current AI crawler activity:

User-agent analysis for common AI crawlers:
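One way to run this audit is to count requests per crawler in your server access logs. The sketch below assumes logs in the common "combined" format, where the user agent is the last quoted field, and matches only the three crawler names used in this article (GPTBot, ClaudeBot, PerplexityBot). The sample log lines are made up for illustration.

```python
import re
from collections import Counter

# Substrings to look for in the User-Agent header. These are the crawlers
# named in this article; extend the tuple for other bots you care about.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")

# Assumption: combined log format, where the user agent is the last
# double-quoted field on the line.
USER_AGENT_RE = re.compile(r'"([^"]*)"\s*$')


def count_ai_crawler_hits(log_lines):
    """Return a Counter mapping crawler token -> number of requests."""
    hits = Counter()
    for line in log_lines:
        match = USER_AGENT_RE.search(line)
        if not match:
            continue
        user_agent = match.group(1).lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in user_agent:
                hits[token] += 1
                break
    return hits


# Hypothetical log lines, for illustration only.
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /blog/a HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.6 - - [10/Jan/2026:10:00:02 +0000] "GET /blog/b HTTP/1.1" 200 734 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '203.0.113.5 - - [10/Jan/2026:10:00:05 +0000] "GET /resources/ HTTP/1.1" 200 281 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.9 - - [10/Jan/2026:10:00:07 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

for bot, count in count_ai_crawler_hits(sample_log).most_common():
    print(f"{bot}: {count} requests")
```

User-agent strings can be spoofed, so treat these counts as a first pass rather than proof of which crawler made a request.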

Step 2: Structure Your LLMs.txt File

Place your LLMs.txt file in your root directory (yoursite.com/LLMs.txt). Here's a comprehensive template:

```txt
# LLMs.txt - AI Crawler Policy File
# Generated: January 2026

# Global AI Training Permissions
User-agent: *
Training-data: allowed
Citation-required: yes
Content-freshness: 30-days

# OpenAI GPTBot Specific Rules
User-agent: GPTBot
Allow: /blog/
Allow: /resources/
Disallow: /private/
Training-data: allowed
Citation-required: yes
Attribution-url: https://yoursite.com/ai-attribution

# Anthropic Claude Directives
User-agent: ClaudeBot
Allow: /
Disallow: /admin/
Disallow: /user-data/
Training-data: conditional
Citation-format: author-title-url

# Perplexity Specific Settings
User-agent: PerplexityBot
Allow: /
Crawl-delay: 2
Training-data: allowed
Real-time-access: preferred

# Content Categories
Content-type: educational
Content-type: informational
License: CC-BY-SA-4.0
Commercial-use: contact-required
```
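To sanity-check a file like the template above, it can help to read it back into a data structure. The following is a minimal sketch of a parser that groups `Key: value` lines under the most recent `User-agent` line. The function name `parse_policy` is hypothetical, and the grouping rule is an assumption borrowed from robots.txt conventions, not something a published LLMs.txt specification defines.

```python
def parse_policy(text):
    """Group `Key: value` directives under the most recent User-agent line.

    Returns {user_agent: {directive_name_lowercased: [values, ...]}}.
    Lines that appear before any User-agent line are ignored.
    """
    policies = {}
    current = None
    for raw in text.splitlines():
        # Drop `#` comments and surrounding whitespace.
        line = raw.split("#", 1)[0].strip()
        if not line or ":" not in line:
            continue
        # Split on the first colon only, so URL values stay intact.
        key, value = (part.strip() for part in line.split(":", 1))
        if key.lower() == "user-agent":
            current = policies.setdefault(value, {})
        elif current is not None:
            current.setdefault(key.lower(), []).append(value)
    return policies


# Excerpt of the template, used as sample input.
sample = """\
# OpenAI GPTBot Specific Rules
User-agent: GPTBot
Allow: /blog/
Allow: /resources/
Disallow: /private/
Training-data: allowed
Attribution-url: https://yoursite.com/ai-attribution
"""

policies = parse_policy(sample)
print(policies["GPTBot"]["allow"])
print(policies["GPTBot"]["attribution-url"])
```

Values are kept as lists because directives such as `Allow` can repeat within one group.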

Step 3: Advanced LLMs.txt Directives

#### Citation Requirements

```txt
# Citation Specifications
Citation-required: yes
Citation-format: apa-style
Attribution-text: "Source: [Your Site Name]"
Backlink-required: yes
```

#### Content Freshness Indicators

```txt
# Freshness and Update Preferences
Content-freshness: 7-days
Update-frequency: weekly
Priority-crawl: /breaking-news/
```
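If you generate or validate these values in your own tooling, duration strings such as `7-days`, `30-days`, or `24-hours` need to be turned into something comparable. Here is a small sketch; the `<number>-<unit>` grammar is inferred from the examples in this article, and the helper names `parse_duration` and `is_stale` are hypothetical.

```python
import re
from datetime import datetime, timedelta

# Assumed grammar: "<integer>-<unit>", as in "7-days" or "24-hours".
_DURATION_RE = re.compile(r"^(\d+)-(hours|days|years)$")


def parse_duration(value):
    """Convert a string like '7-days' into a timedelta."""
    match = _DURATION_RE.match(value.strip().lower())
    if not match:
        raise ValueError(f"unrecognized duration: {value!r}")
    amount, unit = int(match.group(1)), match.group(2)
    if unit == "hours":
        return timedelta(hours=amount)
    if unit == "days":
        return timedelta(days=amount)
    # Years are approximated as 365 days.
    return timedelta(days=365 * amount)


def is_stale(last_crawled, now, freshness):
    """True if more time has passed since last_crawled than freshness allows."""
    return now - last_crawled > parse_duration(freshness)


print(parse_duration("7-days"))
print(is_stale(datetime(2026, 1, 1), datetime(2026, 1, 10), "7-days"))
```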

#### Training Data Preferences

```txt
# Training Data Usage
Training-data: allowed
Training-context: preserve
Data-retention: 2-years
Anonymization: required
```

Platform-Specific Bot Management Strategies

ChatGPT/OpenAI Optimization

ChatGPT's crawler behavior requires specific considerations:

```txt
User-agent: GPTBot
Preferred-format: structured
Schema-markup: preferred
Content-sections: preserve-hierarchy
```
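When you maintain rules for several platforms, it can be easier to keep them as data and render the file from it, so each platform's block stays consistent. The sketch below assumes a simple mapping of user agent to ordered directive pairs. `render_policy` is a hypothetical helper, and the directive names are taken from the examples in this article.

```python
def render_policy(groups):
    """Render {user_agent: [(directive, value), ...]} as policy-file text.

    Groups are separated by a blank line, following robots.txt conventions.
    """
    blocks = []
    for agent, directives in groups.items():
        lines = [f"User-agent: {agent}"]
        lines += [f"{name}: {value}" for name, value in directives]
        blocks.append("\n".join(lines))
    return "\n\n".join(blocks) + "\n"


platform_rules = {
    "GPTBot": [
        ("Preferred-format", "structured"),
        ("Schema-markup", "preferred"),
    ],
    "PerplexityBot": [
        ("Allow", "/"),
        ("Crawl-delay", "2"),
    ],
}

print(render_policy(platform_rules), end="")
```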

Perplexity AI Crawler Management

Perplexity's real-time search capabilities need special handling:

Claude/Anthropic Directives

Claude's crawler focuses on accuracy and context:

Common LLMs.txt Implementation Mistakes

Mistake #1: Copying Robots.txt Syntax

Many developers simply copy their robots.txt file and rename it. This approach fails because:

Mistake #2: Overly Restrictive Policies

```txt
# DON'T DO THIS
User-agent: *
Disallow: /
Training-data: forbidden
```

This approach blocks all AI access, potentially reducing your content's visibility in AI search results by up to 78%.
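A quick lint step can catch this mistake before the file ships. The sketch below flags any user-agent group that contains a bare `Disallow: /`. It assumes robots.txt-style grouping, where consecutive `User-agent` lines share the rules that follow them, and `find_blanket_blocks` is a hypothetical name.

```python
def find_blanket_blocks(text):
    """Return the user agents whose group contains `Disallow: /`."""
    blocked = []
    agents = []        # user agents of the group currently being read
    in_rules = False   # True once the current group has at least one directive
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()
        if ":" not in line:
            continue
        key, value = (part.strip() for part in line.split(":", 1))
        key = key.lower()
        if key == "user-agent":
            if in_rules:
                # A User-agent line after directives starts a new group.
                agents = []
                in_rules = False
            agents.append(value)
        else:
            in_rules = True
            if key == "disallow" and value == "/":
                blocked.extend(a for a in agents if a not in blocked)
    return blocked


risky = """\
User-agent: *
Disallow: /
Training-data: forbidden

User-agent: GPTBot
Disallow: /private/
"""

print(find_blanket_blocks(risky))
```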

Mistake #3: Ignoring Citation Management

Without proper citation directives, your content may be used without attribution, reducing your brand visibility and authority signals.

Testing and Validating Your LLMs.txt File

Manual Testing Methods
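One manual check is to run the `Allow`/`Disallow` portion of your file through an existing robots.txt parser and confirm that specific paths resolve the way you expect. The sketch below uses Python's standard `urllib.robotparser`, which reads the robots.txt-style lines and skips directives it does not recognize, such as `Training-data`. The rules are an excerpt of the template in this article, and `load_rules` is a hypothetical helper.

```python
from urllib.robotparser import RobotFileParser

# Excerpt of the policy file. Groups are separated by a blank line.
POLICY_RULES = """\
User-agent: GPTBot
Allow: /blog/
Allow: /resources/
Disallow: /private/
Training-data: allowed

User-agent: PerplexityBot
Allow: /
Crawl-delay: 2
"""


def load_rules(text):
    """Parse robots.txt-style rules from a string instead of a URL."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


parser = load_rules(POLICY_RULES)
for path in ("/blog/post", "/private/report"):
    allowed = parser.can_fetch("GPTBot", "https://yoursite.com" + path)
    print(f"GPTBot {path}: {'allowed' if allowed else 'blocked'}")
print("PerplexityBot crawl delay:", parser.crawl_delay("PerplexityBot"))
```

Note that this parser applies the first matching rule in file order, so a group that starts with `Allow: /` will allow every path here, which can differ from crawlers that use longest-match precedence. The check also says nothing about whether a given crawler actually honors the file.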

Automated Monitoring

Set up monitoring for:

Citescope AI's Citation Tracker can help monitor when your content gets cited by major AI platforms, ensuring your LLMs.txt policies are working effectively.
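For a do-it-yourself view of compliance, you can compare what crawlers actually request against the paths you disallowed. The sketch below assumes you have already extracted `(user_agent, path)` pairs from your logs. The `DISALLOWED` table mirrors the template in this article, and `find_violations` is a hypothetical helper.

```python
from collections import defaultdict

# Disallowed path prefixes per crawler, mirroring the template in this article.
DISALLOWED = {
    "GPTBot": ("/private/",),
    "ClaudeBot": ("/admin/", "/user-data/"),
}


def find_violations(requests):
    """Return {bot: [paths]} for requests that hit a disallowed prefix.

    `requests` is an iterable of (user_agent, path) pairs.
    """
    violations = defaultdict(list)
    for user_agent, path in requests:
        for bot, prefixes in DISALLOWED.items():
            # str.startswith accepts a tuple of prefixes.
            if bot.lower() in user_agent.lower() and path.startswith(prefixes):
                violations[bot].append(path)
    return dict(violations)


# Hypothetical requests, for illustration only.
observed = [
    ("Mozilla/5.0 (compatible; GPTBot/1.0)", "/private/report"),
    ("Mozilla/5.0 (compatible; ClaudeBot/1.0)", "/blog/a"),
    ("Mozilla/5.0 (compatible; ClaudeBot/1.0)", "/admin/login"),
    ("Mozilla/5.0 (X11; Linux x86_64)", "/private/report"),
]

for bot, paths in find_violations(observed).items():
    print(f"{bot} requested disallowed paths: {paths}")
```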

Advanced LLMs.txt Strategies for 2026

Dynamic Content Policies

```txt
# Time-based content access
Time-sensitive: /news/
Expiry-date: 24-hours
Archive-access: limited
```

Quality Score Integration

```txt
# Content quality indicators
Quality-score: high
Fact-checked: yes
Expert-reviewed: yes
Source-verification: required
```

Monetization Directives

```txt
# Commercial usage terms
Commercial-license: contact-required
Revenue-sharing: negotiable
API-access: premium-tier
```

The ROI of Proper AI Crawler Management

Implementing comprehensive LLMs.txt policies delivers measurable results:

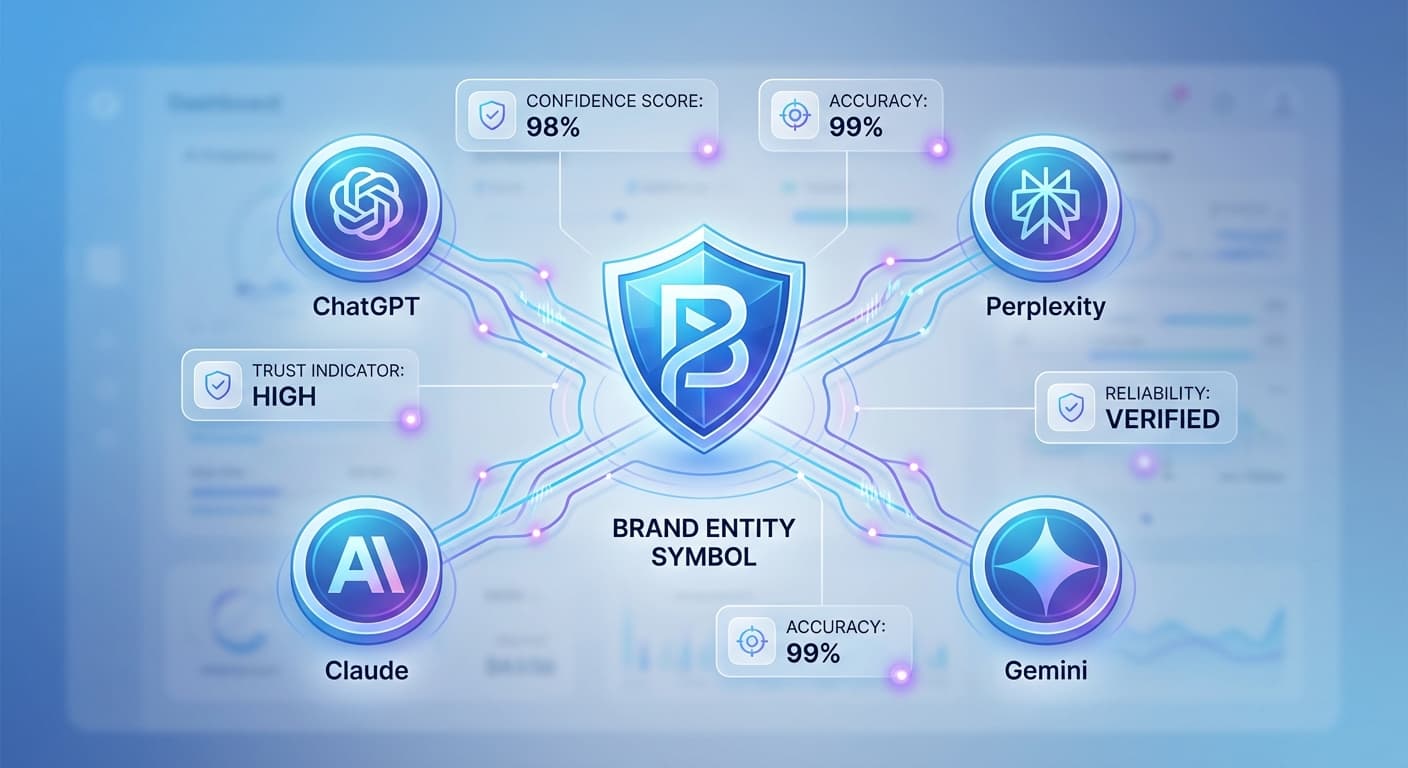

How Citescope AI Helps

Managing AI crawler policies manually can be overwhelming, especially as new AI platforms emerge regularly. Citescope AI streamlines this process by:

Future-Proofing Your AI Crawler Strategy

As AI search continues to evolve, your LLMs.txt strategy must adapt:

Emerging Considerations

Staying Updated

Ready to Optimize for AI Search?

Don't let AI crawlers ignore your policies while your content retrieval drops by 63%. Citescope AI provides the tools you need to master AI crawler management, track your citations, and optimize your content for the AI-powered future of search. Start with our free tier and get 3 content optimizations to see how proper AI search optimization can transform your content strategy. Try Citescope AI today and ensure your content gets the AI visibility it deserves.