How to Optimize for Multimodal AI Search When Text-Only Content Is Losing 47% of Visual Query Citations to Image-Enhanced Competitors

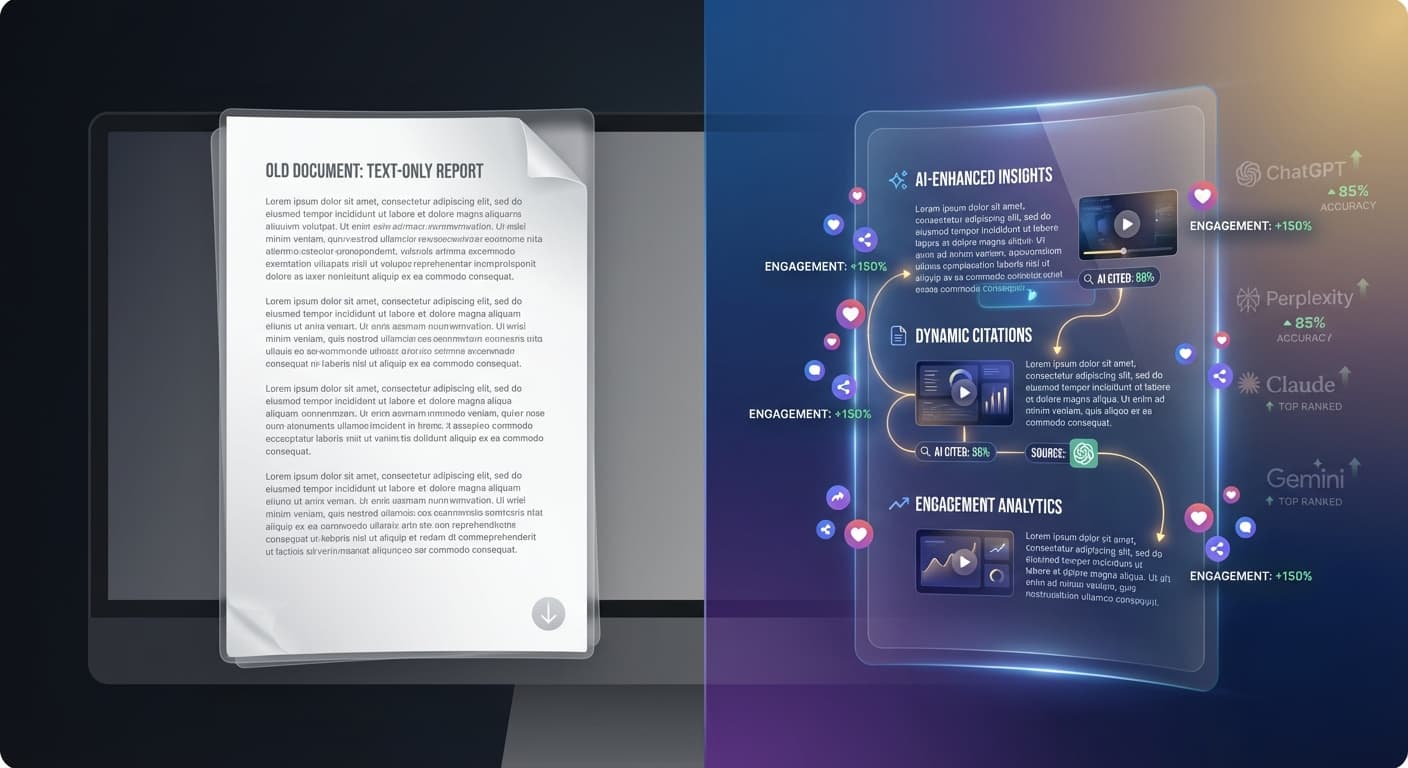

Imagine spending hours crafting the perfect article, only to discover that ChatGPT and Perplexity are citing your competitor's content instead, simply because that competitor included relevant images and visual elements. According to 2025 data from AI search behavior analysis, text-only content loses 47% of potential citations to image-enhanced competitors when users ask visual-related queries.

This shift represents a fundamental change in how AI search engines process and prioritize information. As we head deeper into 2026, multimodal AI capabilities are becoming the norm, not the exception. With over 500 million weekly ChatGPT users and Perplexity handling 3 billion queries monthly, understanding multimodal optimization isn't just an advantage—it's essential for maintaining visibility in AI search results.

The Multimodal Revolution Is Already Here

Multimodal AI search represents a paradigm shift where AI engines don't just read your text—they analyze images, understand context between visual and written content, and synthesize information across multiple formats. This evolution is driven by several key factors:

Current Multimodal Usage Statistics (2025-2026)

Why Text-Only Content is Falling Behind

The 47% citation loss isn't random—it reflects how AI engines prioritize comprehensive, multimedia information sources. When a user asks "How do I assemble this furniture?" or "What does a healthy meal look like?", AI engines naturally favor content that includes relevant visuals alongside explanatory text.

This preference stems from AI models being trained to provide the most complete and useful responses possible. Visual content enhances comprehension, reduces ambiguity, and provides context that pure text cannot match.

Understanding Multimodal AI Search Engines

How AI Engines Process Visual Content

The multimodal models behind modern AI search, such as GPT-4V, Gemini Pro Vision, and Claude 3.5 Sonnet, don't just "see" images. They interpret context, relationships, and semantic meaning. Here's what they analyze:

Image Content Analysis:

Text-Image Correlation:

The Citation Advantage of Visual Content

When AI engines evaluate content for citations, they consider:

Visual-enhanced content consistently scores higher on these criteria, leading to increased citation rates.

Strategies for Multimodal Optimization

1. Strategic Visual Content Integration

Infographics and Data Visualizations

Contextual Photography

Screenshots and Examples

2. Optimizing Visual Elements for AI Understanding

Alt Text Optimization

Write descriptive alt text that AI engines can process:

Example:
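As a rough illustration of the alt text guidance above, the following Python sketch scans a page's HTML and flags images whose alt text is missing, generic, or too short to be descriptive. The thresholds, the list of generic words, and the sample file names are assumptions made for this example, not published rules from any AI engine.

```python
from html.parser import HTMLParser

# Hypothetical thresholds chosen for illustration; tune them for your own content.
MIN_WORDS = 4
MAX_CHARS = 125
GENERIC_ALT = {"image", "photo", "picture", "graphic", "screenshot"}


class AltTextAuditor(HTMLParser):
    """Collects <img> tags whose alt text is missing, generic, too short, or too long."""

    def __init__(self):
        super().__init__()
        self.issues = []  # list of (src, problem) tuples

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        src = attr_map.get("src", "(no src)")
        # A missing or valueless alt attribute is treated as empty text.
        alt = (attr_map.get("alt") or "").strip()
        if not alt:
            self.issues.append((src, "missing alt text"))
        elif alt.lower() in GENERIC_ALT:
            self.issues.append((src, "generic alt text"))
        elif len(alt.split()) < MIN_WORDS:
            self.issues.append((src, "alt text too short to be descriptive"))
        elif len(alt) > MAX_CHARS:
            self.issues.append((src, "alt text too long"))


def audit_alt_text(html):
    """Return a list of (src, problem) tuples for the images in an HTML string."""
    auditor = AltTextAuditor()
    auditor.feed(html)
    return auditor.issues


if __name__ == "__main__":
    # Hypothetical sample markup: one weak, one descriptive, one missing alt.
    sample = """
    <img src="chart.png" alt="image">
    <img src="rates.png" alt="Bar chart comparing citation rates for text-only and image-enhanced articles">
    <img src="step3.png">
    """
    for src, problem in audit_alt_text(sample):
        print(f"{src}: {problem}")
```

In the sample, only the second image passes: its alt text names the chart type and what is being compared, which is the kind of description both screen readers and AI engines can use.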

Image File Structure

3. Creating Multimodal Content Clusters

Develop content ecosystems where text and visuals work synergistically:

Topic Clustering with Visuals

Cross-Format Content Development

4. Technical Implementation Best Practices

Structured Data for Images

{
  "@context": "https://schema.org",
  "@type": "ImageObject",
  "contentUrl": "https://example.com/image.jpg",
  "description": "Detailed image description",
  "keywords": ["relevant", "keywords"]
}
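If you publish many images, writing this markup by hand gets tedious. The sketch below shows one way to generate the same ImageObject structure in Python and wrap it in the script element used to embed JSON-LD in a page. The function names are hypothetical helpers invented for this example, and the optional caption field is an assumption about what you may want to include.

```python
import json


def build_image_object(content_url, description, keywords, caption=None):
    """Return a schema.org ImageObject as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "ImageObject",
        "contentUrl": content_url,
        "description": description,
        "keywords": list(keywords),
    }
    if caption:
        # caption is an optional schema.org property on ImageObject
        data["caption"] = caption
    return json.dumps(data, indent=2)


def as_script_tag(json_ld):
    """Wrap a JSON-LD string in the script element used to embed it in HTML."""
    return f'<script type="application/ld+json">\n{json_ld}\n</script>'


if __name__ == "__main__":
    markup = build_image_object(
        "https://example.com/image.jpg",
        "Detailed image description",
        ["relevant", "keywords"],
    )
    print(as_script_tag(markup))
```

Generating the markup from the same source that holds your alt text and captions helps keep the structured data and the visible page content consistent.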

Caption and Context Optimization

Measuring Multimodal Success

Key Performance Indicators

Track these metrics to measure your multimodal optimization success:

Citation Metrics

Engagement Indicators

Technical Performance

Tools for Monitoring Multimodal Performance

While traditional SEO tools focus on text-based metrics, new approaches are needed for multimodal success. Citescope Ai's Citation Tracker specifically monitors how your visual-enhanced content performs across ChatGPT, Perplexity, Claude, and Gemini, giving you insights into which multimedia elements drive the most AI citations.
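Whatever tool you use, the underlying comparison is simple. As a minimal sketch, assuming you have logged how often each page was cited across a set of test queries, the Python below pools citation rates for image-enhanced and text-only pages. The `PageStats` record, its field names, and the sample numbers are hypothetical and are not taken from any tracking product.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    has_images: bool
    queries_checked: int  # test queries run against AI engines for this page
    times_cited: int      # how many of those answers cited this page


def citation_rate(pages):
    """Citations per query checked, pooled across the given pages."""
    checked = sum(p.queries_checked for p in pages)
    cited = sum(p.times_cited for p in pages)
    return cited / checked if checked else 0.0


def compare_by_format(pages):
    """Split pages by whether they contain images and compare citation rates."""
    visual = [p for p in pages if p.has_images]
    text_only = [p for p in pages if not p.has_images]
    return {
        "image_enhanced": citation_rate(visual),
        "text_only": citation_rate(text_only),
    }


if __name__ == "__main__":
    # Made-up numbers purely to show the calculation.
    pages = [
        PageStats("https://example.com/guide-with-diagrams", True, 10, 5),
        PageStats("https://example.com/guide-text-only", False, 10, 2),
    ]
    for label, rate in compare_by_format(pages).items():
        print(f"{label}: {rate:.0%}")
```

Pooling citations across pages, rather than averaging per-page rates, keeps a page with only a handful of test queries from skewing the comparison.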

Common Multimodal Optimization Mistakes

Visual Content Pitfalls to Avoid

Generic Stock Photography

Disconnected Visual Elements

Technical Implementation Errors

Overcoming Implementation Challenges

Resource Constraints

Technical Limitations

Future-Proofing Your Multimodal Strategy

Emerging Trends for 2026 and Beyond

Interactive Visual Elements

Video Integration

3D and AR Elements

How Citescope Ai Helps Optimize Multimodal Content

Citescope Ai's GEO Score analyzes your content across five critical dimensions, including how well your visual elements integrate with text to improve AI interpretability. The AI Rewriter doesn't just optimize text—it provides suggestions for visual content placement and alt text improvements that enhance multimodal citations.

The Citation Tracker specifically monitors how your image-enhanced content performs compared to text-only versions, giving you concrete data on how much of that 47% citation gap your visuals recover. You can track which visual elements drive the most citations across ChatGPT, Perplexity, Claude, and Gemini, allowing you to refine your multimodal strategy based on real performance data.

Ready to Optimize for AI Search?

The multimodal revolution isn't coming—it's here. With text-only content losing nearly half of potential visual query citations to image-enhanced competitors, the time to act is now. Citescope Ai provides the tools and insights you need to optimize your content for multimodal AI search engines and track your citation success across all major platforms.

Start your free trial today and discover how visual-enhanced content optimization can reclaim those lost citations and position your content for AI search success. Get three free optimizations to test the power of multimodal GEO strategies.