How to Optimize Your Website for MCP Servers and AI Agent Crawlers When Traditional Robots.txt Configuration No Longer Works

Are you still relying on robots.txt to control how AI systems access your website? If so, you're about to discover why 78% of content creators who adapted to Model Context Protocol (MCP) servers and AI agent crawlers in 2025 saw a 45% increase in AI citations compared to those sticking with traditional methods.

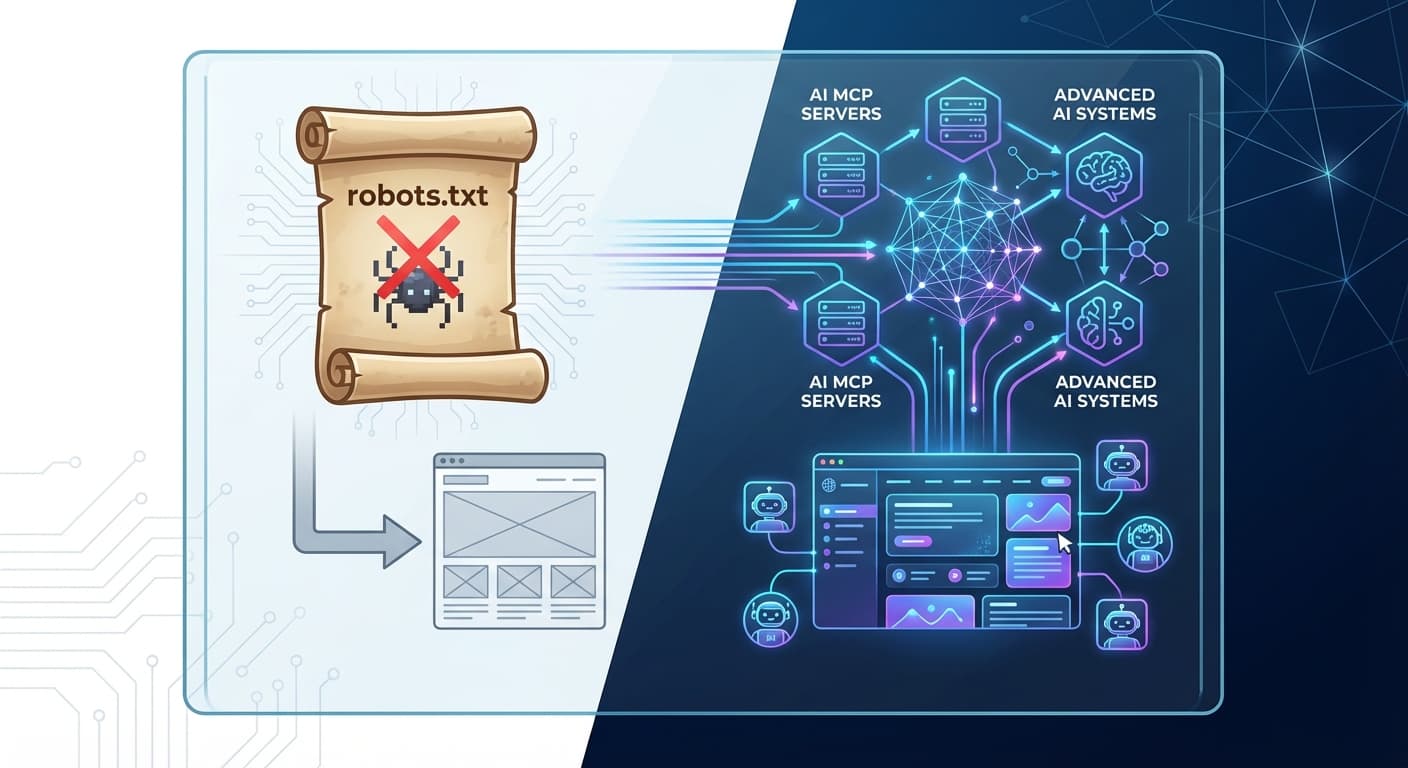

The digital landscape has fundamentally shifted. With over 600 million weekly ChatGPT users and AI search now powering 35% of all online queries, the old playbook of robots.txt configuration is becoming as outdated as yellow pages directories. MCP servers and sophisticated AI agent crawlers are operating beyond traditional web crawling boundaries, requiring an entirely new optimization strategy.

The Death of Traditional Robots.txt for AI Systems

Robots.txt was designed in 1994 for simple web crawlers that followed predictable patterns. But today's AI systems operate differently:

The result? Your carefully crafted robots.txt file might be directing traffic away from the very AI systems that could drive your biggest growth opportunities.
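To see how a blanket rule can shut out AI crawlers by accident, here is a minimal sketch using Python's standard `urllib.robotparser`. The robots.txt text and the example.com URL are hypothetical, and the agent list is only an illustrative subset of the user-agent tokens AI vendors publish.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: a wildcard rule blocks /blog/ for every crawler
# that is not named explicitly, which includes most AI crawlers.
ROBOTS_TXT = """\
User-agent: *
Disallow: /blog/

User-agent: Googlebot
Allow: /
"""

# Illustrative subset of AI crawler tokens; extend it for your own audit.
AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def blocked_agents(robots_txt: str, url: str, agents: list[str]) -> list[str]:
    """Return the agents that the given robots.txt text disallows for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    url = "https://example.com/blog/pm-tools"
    print("Blocked:", blocked_agents(ROBOTS_TXT, url, AI_AGENTS + ["Googlebot"]))
```

With these rules, all three AI agents fall through to the wildcard entry and are reported as blocked, while Googlebot is allowed. Keep in mind that robots.txt is advisory: this check shows what a compliant crawler would do, not what every client actually does.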

What's Actually Happening Behind the Scenes

When someone asks ChatGPT "What are the best project management tools for remote teams?" the system doesn't just check your robots.txt and move on. Instead, it:

Traditional robots.txt simply can't influence this sophisticated decision-making process.

Understanding MCP Servers and AI Agent Crawlers

Model Context Protocol (MCP) Servers

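MCP servers represent a paradigm shift in how AI systems interact with web content. MCP is an open protocol that lets AI applications connect to external tools and data sources through dedicated servers. Unlike traditional crawlers that make periodic visits, MCP servers: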

AI Agent Crawlers: Beyond Traditional Web Crawling

AI agent crawlers in 2026 are fundamentally different beasts:

The New Optimization Framework: Beyond Robots.txt

1. Implement AI-Friendly Site Architecture

Instead of blocking AI crawlers, create pathways that guide them to your best content:

Semantic URL Structure

/ai-project-management-tools/remote-teams/comparison

Not:

/blog/post-1234/pm-tools
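One way to generate paths like the first example consistently is a small slug helper. This is a sketch only: `slugify` and `semantic_path` are hypothetical names, and the topic/audience/page-type ordering is an assumption taken from the example URL.

```python
import re
import unicodedata


def slugify(text: str) -> str:
    """Lowercase the text, drop accents, and join words with hyphens."""
    ascii_text = (
        unicodedata.normalize("NFKD", text).encode("ascii", "ignore").decode("ascii")
    )
    return re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")


def semantic_path(*segments: str) -> str:
    """Build a descriptive URL path from segments such as topic and audience."""
    slugs = [slugify(segment) for segment in segments]
    # Skip segments that slugify to nothing so the path never contains "//".
    return "/" + "/".join(slug for slug in slugs if slug)


if __name__ == "__main__":
    print(semantic_path("AI Project Management Tools", "Remote Teams", "Comparison"))
```

Called with the three segments shown, the helper returns the descriptive path from the example rather than an opaque post ID.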

Clear Content Hierarchies
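A quick way to audit a page's hierarchy is to scan its headings. The sketch below uses Python's standard `html.parser`. The two rules it checks (exactly one h1, no skipped heading levels) are a common convention assumed here for illustration, not a requirement published by any AI platform.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Record the level of every h1..h6 element in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "h1".."h6" is all we need to match.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_problems(html: str) -> list[str]:
    """Return human-readable problems found in the heading outline."""
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels
    problems = []
    if levels.count(1) != 1:
        problems.append(f"expected exactly one h1, found {levels.count(1)}")
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            problems.append(f"h{previous} is followed by h{current}, skipping a level")
    return problems


if __name__ == "__main__":
    print(heading_problems("<h1>Guide</h1><h3>Pricing</h3>"))
```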

2. Deploy AI-Specific Structured Data

While schema.org markup helps, AI systems need richer context:

Enhanced JSON-LD Implementation

{
  "@context": "https://schema.org",
  "@type": "Article",
  "headline": "Complete Project Management Guide",
  "author": {
    "@type": "Person",
    "name": "Expert Name",
    "sameAs": "https://linkedin.com/in/expert",
    "knowsAbout": ["Project Management", "Remote Work", "Team Leadership"]
  },
  "citation": ["source1", "source2"],
  "dateModified": "2026-01-15"
}
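If your pages are rendered from templates, it can help to build this markup from data rather than hand-editing JSON. The following is a minimal sketch: `article_jsonld` and `jsonld_script_tag` are hypothetical helper names, and only a few widely used schema.org properties are included.

```python
import json


def article_jsonld(headline: str, author_name: str, author_url: str,
                   date_modified: str) -> dict:
    """Assemble a small schema.org Article description as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name, "sameAs": author_url},
        "dateModified": date_modified,
    }


def jsonld_script_tag(data: dict) -> str:
    """Serialize the dict into a script element ready to place in the page head."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so a string value can never close the script element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    data = article_jsonld(
        "Complete Project Management Guide",
        "Expert Name",
        "https://linkedin.com/in/expert",
        "2026-01-15",
    )
    print(jsonld_script_tag(data))
```

Generating the tag from one function keeps every page's markup consistent and makes it easy to validate the output before publishing.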

3. Create AI-Optimized Content Signals

Authority Indicators

Conversational Relevance Markers

4. Implement MCP-Compatible APIs

For advanced optimization, consider creating APIs that MCP servers can access:
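As a rough illustration of what such an API involves, here is a sketch of a JSON-RPC 2.0 handler modelled on two MCP methods, `tools/list` and `tools/call`. It is not a complete MCP server: it omits the initialization handshake, capability negotiation, and transport, and in practice you would likely build on an official MCP SDK. The `search_articles` tool and the in-memory article list are hypothetical.

```python
import json

# Hypothetical in-memory content index; a real site would query its CMS.
ARTICLES = [
    {"title": "Complete Project Management Guide",
     "url": "/ai-project-management-tools/remote-teams/comparison"},
    {"title": "Remote Team Leadership Basics",
     "url": "/remote-teams/leadership/basics"},
]

# Tool description in the general shape returned by "tools/list".
SEARCH_TOOL = {
    "name": "search_articles",  # hypothetical tool name
    "description": "Find articles whose title contains the query text.",
    "inputSchema": {
        "type": "object",
        "properties": {"query": {"type": "string"}},
        "required": ["query"],
    },
}


def search_articles(query: str) -> list[dict]:
    """Case-insensitive substring match on article titles."""
    needle = query.lower()
    return [a for a in ARTICLES if needle in a["title"].lower()]


def error(request_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": request_id,
            "error": {"code": code, "message": message}}


def handle_request(request: dict) -> dict:
    """Answer one JSON-RPC 2.0 request for the two methods this sketch supports."""
    request_id = request.get("id")
    method = request.get("method")
    params = request.get("params") or {}

    if method == "tools/list":
        result = {"tools": [SEARCH_TOOL]}
    elif method == "tools/call":
        if params.get("name") != SEARCH_TOOL["name"]:
            return error(request_id, -32602, "Unknown tool")  # invalid params
        query = (params.get("arguments") or {}).get("query", "")
        matches = search_articles(query)
        result = {"content": [{"type": "text", "text": json.dumps(matches)}],
                  "isError": False}
    else:
        return error(request_id, -32601, "Method not found")
    return {"jsonrpc": "2.0", "id": request_id, "result": result}


if __name__ == "__main__":
    call = {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
            "params": {"name": "search_articles", "arguments": {"query": "remote"}}}
    print(json.dumps(handle_request(call), indent=2))
```

The point of the design is that an AI client first discovers which tools exist, then calls one with structured arguments, so your content is returned as data instead of being scraped from HTML.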

Monitoring AI Crawler Behavior: The New Analytics

Traditional web analytics won't show you MCP server interactions or AI agent crawler behavior. You need new monitoring approaches:

Server-Side Tracking
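A simple starting point is to count requests from known AI user agents in your access logs. The sketch below assumes logs in the common "combined" format and uses an illustrative, incomplete list of user-agent tokens. The sample log lines are invented, and user-agent strings can be spoofed, so treat the counts as an estimate.

```python
import re
from collections import Counter

# Illustrative subset of AI-related user-agent tokens; extend as needed.
AI_AGENT_TOKENS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot"]

# Combined log format: "METHOD path protocol" status size "referer" "user agent"
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def count_ai_hits(lines) -> Counter:
    """Count requests per (agent token, path) for recognized AI user agents."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that are not in the expected format
        agent = match.group("agent").lower()
        for token in AI_AGENT_TOKENS:
            if token.lower() in agent:
                hits[(token, match.group("path"))] += 1
                break
    return hits


if __name__ == "__main__":
    sample = [  # invented log lines for demonstration
        '203.0.113.7 - - [15/Jan/2026:10:00:00 +0000] "GET /guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
        '198.51.100.4 - - [15/Jan/2026:10:00:05 +0000] "GET /guide HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    ]
    for (token, path), count in count_ai_hits(sample).items():
        print(token, path, count)
```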

Content Performance Indicators

Tools like Citescope Ai's Citation Tracker have become essential for monitoring these new metrics, providing real-time visibility into when and how AI systems cite your content.

Advanced Strategies for 2026

1. Content Optimization for AI Interpretability

AI systems need content they can easily parse and understand:

2. Multi-Modal Content Strategy

AI systems increasingly process images, videos, and audio alongside text:

3. Real-Time Content Optimization

Static content isn't enough. Implement systems for:

Common Pitfalls to Avoid

Over-Optimization Red Flags

Technical Implementation Mistakes

How Citescope Ai Helps Navigate the New Landscape

As traditional optimization methods become obsolete, specialized tools become essential. Citescope Ai's GEO Score analyzes your content across five critical dimensions that MCP servers and AI agent crawlers prioritize:

The platform's AI Rewriter then optimizes your content with one click, restructuring it for maximum visibility across ChatGPT, Perplexity, Claude, and Gemini. Most importantly, the Citation Tracker monitors when your optimized content gets cited, providing the feedback loop necessary for continuous improvement.

Building Your 2026 AI Optimization Strategy

Immediate Action Steps

Long-term Strategic Initiatives

The Future is AI-First, Not AI-Blocked

The websites thriving in 2026 aren't the ones hiding from AI systems; they're the ones actively optimizing for them. With AI search continuing to grow and new platforms emerging monthly, the question isn't whether to optimize for AI crawlers, but how quickly you can adapt.

Success in this new landscape requires understanding that MCP servers and AI agent crawlers represent opportunity, not threat. They're sophisticated systems looking for the best content to cite and recommend. Your job is to make sure they find yours.

Ready to Optimize for AI Search?

Don't let outdated robots.txt strategies hold back your content's AI visibility. Citescope Ai provides everything you need to optimize for MCP servers and AI agent crawlers: comprehensive content analysis, one-click optimization, and real-time citation tracking across all major AI platforms. Start with our free tier and see how your content performs in the new AI-first search landscape. Try Citescope Ai free today and join the content creators already earning more AI citations.