How to Stop Black Hat LLM SEO Tactics From Poisoning Your Brand's AI Search Rankings

With AI search engines now handling over 30% of all online queries in 2026, a new breed of SEO manipulation has emerged that threatens brand integrity. Traditional black hat tactics focused on gaming Google's algorithm. Today's malicious actors are instead weaponizing large language models to spread misinformation, hijack brand mentions, and manipulate AI-generated responses across platforms like ChatGPT, Perplexity, and Claude.

The stakes have never been higher. When someone asks ChatGPT about your brand, what story gets told? The one you've carefully crafted, or the one planted by competitors using sophisticated LLM manipulation techniques?

The Rise of Black Hat LLM SEO: A Growing Threat

As AI search adoption exploded in 2025, with over 70% of Gen Z now using AI tools for research, unscrupulous marketers began developing tactics specifically designed to manipulate AI training data and real-time retrieval systems. These aren't the keyword-stuffing schemes of yesterday. They're sophisticated campaigns that exploit how AI systems process and prioritize information.

Recent analysis shows that over 15% of AI search results for competitive business terms now contain some form of manipulated content, representing a 400% increase from just 18 months ago.

Common Black Hat LLM SEO Tactics Targeting Brands

Information Poisoning Campaigns

Competitors create networks of fake websites, social profiles, and forum posts containing subtle negative information about target brands. These sources are designed to look legitimate to AI crawlers while spreading damaging narratives.

Citation Hijacking

Malicious actors create content that mimics authoritative sources, using similar domain names, writing styles, and citation patterns to trick AI systems into treating fabricated information as credible.
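One way to spot lookalike domains among the sources an AI system cites is to compare each cited hostname against your official ones. The sketch below is a minimal illustration, not a complete detector: the `OFFICIAL_DOMAINS` set, the function names, and the 0.8 similarity threshold are all assumptions chosen for the example.

```python
from difflib import SequenceMatcher
from urllib.parse import urlparse

# Hypothetical official domains; replace with the ones your brand controls.
OFFICIAL_DOMAINS = {"example.com", "docs.example.com"}


def hostname(url: str) -> str:
    """Return the lowercase hostname of a URL, without a leading 'www.'."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def lookalike_score(host: str) -> float:
    """Highest string similarity between host and any official domain."""
    return max(SequenceMatcher(None, host, d).ratio() for d in OFFICIAL_DOMAINS)


def flag_lookalikes(urls, threshold=0.8):
    """Return hosts that resemble, but do not match, an official domain."""
    flagged = []
    for url in urls:
        host = hostname(url)
        if host and host not in OFFICIAL_DOMAINS and lookalike_score(host) >= threshold:
            flagged.append(host)
    return flagged
```

String similarity only catches typo-style imitations. Domains that mimic a brand through wording or design would need manual review.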

Prompt Injection Attacks

Sophisticated campaigns embed hidden instructions within web content designed to influence how AI models respond to queries about specific brands or topics.
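To make this concrete, the sketch below scans a page for text that is hidden from human readers yet contains instruction-like phrasing. It is a rough heuristic under stated assumptions: the `SUSPICIOUS` phrase list is illustrative only, and it checks inline `style` and `hidden` attributes but not external stylesheets or scripts.

```python
import re
from html.parser import HTMLParser

# Illustrative phrases only (an assumption); real injections vary widely.
SUSPICIOUS = re.compile(
    r"ignore (all )?(previous|prior) instructions|you are an ai|when asked about",
    re.IGNORECASE,
)
# Elements without closing tags, so they are never pushed on the stack.
VOID_TAGS = {"br", "hr", "img", "input", "link", "meta", "source", "wbr"}


def _is_hidden(attrs) -> bool:
    """True if the tag's own attributes hide it from human readers."""
    attr = dict(attrs)
    style = (attr.get("style") or "").replace(" ", "").lower()
    return "hidden" in attr or "display:none" in style or "visibility:hidden" in style


class HiddenTextScanner(HTMLParser):
    """Collects text that sits inside visually hidden elements."""

    def __init__(self):
        super().__init__()
        self.stack = []        # (tag, hidden) for each open element
        self.hidden_text = []  # text found inside hidden elements

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        parent_hidden = bool(self.stack) and self.stack[-1][1]
        self.stack.append((tag, parent_hidden or _is_hidden(attrs)))

    def handle_endtag(self, tag):
        # Pop back to the most recent matching open tag, if any.
        for i in range(len(self.stack) - 1, -1, -1):
            if self.stack[i][0] == tag:
                del self.stack[i:]
                break

    def handle_data(self, data):
        if self.stack and self.stack[-1][1] and data.strip():
            self.hidden_text.append(data.strip())


def find_hidden_instructions(html: str):
    """Return hidden text fragments that match the suspicious phrase list."""
    scanner = HiddenTextScanner()
    scanner.feed(html)
    scanner.close()
    return [t for t in scanner.hidden_text if SUSPICIOUS.search(t)]
```

Running this over pages that mention your brand can surface candidates for manual review. A match is a signal to investigate, not proof of an attack.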

False Authority Building

Attackers create fake expert profiles, testimonials, and reviews across multiple platforms to establish false credibility that AI systems then reference in responses.

Semantic Pollution

Attackers flood the web with AI-generated content that associates target brands with negative concepts through careful keyword and context manipulation.

How Black Hat Tactics Poison AI Search Results

Unlike traditional search engines that rely primarily on link analysis and keyword matching, AI systems make decisions based on semantic understanding and contextual relevance. This creates new vulnerabilities that black hat practitioners exploit.

The AI Training Data Problem

Many AI models were trained on web data that includes manipulated content. Once misinformation becomes part of the training data, it can keep influencing responses until the model is retrained on cleaner data. Even newer models with real-time web access can be misled by coordinated misinformation campaigns.

Retrieval-Augmented Generation (RAG) Exploitation

AI search engines like Perplexity use RAG to pull current information from the web. Black hat actors optimize fake content specifically for these retrieval systems, tailoring it to the way they appear to prioritize and select sources.
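A toy example shows why relevance alone is a weak defense. The sketch below ranks documents by plain term-frequency cosine similarity, which is an assumption made for illustration and not how any particular AI search product works. A page stuffed with the query's own words outranks the official page until a per-source trust weight (also hypothetical) is applied.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    """Lowercased word counts for a piece of text."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def rank(query: str, docs: dict, trust: dict = None):
    """Rank doc ids by similarity, optionally scaled by a per-source trust weight."""
    q = vectorize(query)
    scores = {
        doc_id: cosine(q, vectorize(text)) * (trust or {}).get(doc_id, 1.0)
        for doc_id, text in docs.items()
    }
    return sorted(scores, key=scores.get, reverse=True)


docs = {
    "official": "Acme publishes independent reliability test results every quarter.",
    "planted": "Acme reliability problems: Acme reliability problems reported, "
               "Acme problems, reliability problems.",
}
query = "acme reliability problems"
print(rank(query, docs))                            # relevance only
print(rank(query, docs, trust={"planted": 0.2}))    # relevance scaled by trust
```

The practical takeaway for brands is that authority signals around your official content matter as much as the wording on the page itself.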

Context Window Manipulation

Advanced practitioners create content designed to appear in AI context windows alongside legitimate brand information, subtly influencing the overall narrative through strategic placement and semantic association.

Protecting Your Brand: Detection Strategies

1. Monitor AI Search Results Regularly

Traditional SEO monitoring isn't enough anymore. You need to actively query AI systems about your brand using various prompt styles:

Document responses and track changes over time to identify emerging narratives or sudden shifts in AI-generated content about your brand.
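The monitoring loop described above can be sketched in a few lines. The prompt templates, function names, and the 0.5 drift threshold are assumptions for illustration. Fetching the answers is deliberately left out, since each AI platform has its own API and terms of use.

```python
from difflib import SequenceMatcher

# Hypothetical prompt templates; adjust to the questions your customers actually ask.
PROMPT_TEMPLATES = [
    "What is {brand}?",
    "Is {brand} trustworthy?",
    "What are the main complaints about {brand}?",
    "Compare {brand} with its competitors.",
]


def build_prompts(brand: str):
    """Fill each template with the brand name."""
    return [t.format(brand=brand) for t in PROMPT_TEMPLATES]


def drift(previous: str, current: str) -> float:
    """Word-level change between two answers: 0.0 is identical, 1.0 shares nothing."""
    return 1.0 - SequenceMatcher(None, previous.split(), current.split()).ratio()


def changed_prompts(old_run: dict, new_run: dict, threshold: float = 0.5):
    """Return prompts whose answers changed by more than threshold between two runs."""
    return [
        prompt
        for prompt, answer in new_run.items()
        if prompt in old_run and drift(old_run[prompt], answer) > threshold
    ]
```

AI answers vary naturally from run to run, so the threshold needs tuning against a baseline of normal variation before a flagged prompt means much.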

2. Audit Your Citation Network

Analyze which sources AI systems cite when mentioning your brand. Look for:
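A citation audit can start with a simple tally. The following sketch, which assumes you have already collected the URLs an AI system cited, groups them by hostname and separates domains you control from third-party ones. The function name and the input format are illustrative assumptions.

```python
from collections import Counter
from urllib.parse import urlparse


def audit_citations(cited_urls, official_domains):
    """Count cited hostnames and split them into official and third-party."""
    counts = Counter()
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if host:  # skip strings that are not parseable URLs
            counts[host] += 1
    official = {h: n for h, n in counts.items() if h in official_domains}
    third_party = {h: n for h, n in counts.items() if h not in official_domains}
    return official, third_party
```

Third-party domains that appear often but that you do not recognize are the natural starting point for a closer look.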

3. Implement Semantic Monitoring

Move beyond simple keyword alerts to monitor semantic associations. Track:
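As a rough illustration of association tracking, the sketch below counts negative terms that appear within a few words of the brand name. It assumes a single-word brand name and a hand-picked `NEGATIVE_TERMS` list, both of which are simplifications. A production system would more likely rely on a sentiment or embedding model.

```python
import re
from collections import Counter

# Illustrative term list (an assumption); extend or replace for your industry.
NEGATIVE_TERMS = {"scam", "lawsuit", "recall", "unsafe", "fraud"}


def negative_associations(text: str, brand: str, window: int = 5) -> Counter:
    """Count negative terms appearing within `window` words of the brand name."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    brand = brand.lower()
    hits = Counter()
    for i, word in enumerate(words):
        if word != brand:
            continue
        nearby = words[max(0, i - window): i + window + 1]
        hits.update(w for w in nearby if w in NEGATIVE_TERMS)
    return hits
```

Tracking these counts per source and per week makes a sudden rise in a specific association easier to notice than it would be with keyword alerts alone.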

4. Cross-Platform Verification

Compare how different AI systems respond to identical queries about your brand. Significant disparities may indicate platform-specific manipulation attempts.
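One simple way to quantify such disparities is word-set overlap between answers. The sketch below is an assumption-laden starting point: it uses Jaccard similarity on raw words, and the platform labels are placeholders. It returns the platform whose answer agrees least with the rest.

```python
import re
from itertools import combinations


def tokens(text: str) -> set:
    """Set of lowercased words in a response."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Word-set overlap between two responses, from 0.0 to 1.0."""
    ta, tb = tokens(a), tokens(b)
    return len(ta & tb) / len(ta | tb) if ta | tb else 1.0


def outlier_platform(responses: dict) -> str:
    """Return the platform whose answer overlaps least, on average, with the others."""
    names = list(responses)
    if len(names) < 2:
        raise ValueError("need responses from at least two platforms")
    totals = {name: 0.0 for name in names}
    for x, y in combinations(names, 2):
        score = jaccard(responses[x], responses[y])
        totals[x] += score
        totals[y] += score
    return min(names, key=lambda n: totals[n] / (len(names) - 1))
```

Word overlap ignores meaning, so two answers can differ in wording while agreeing in substance. Treat the outlier as a prompt for human review.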

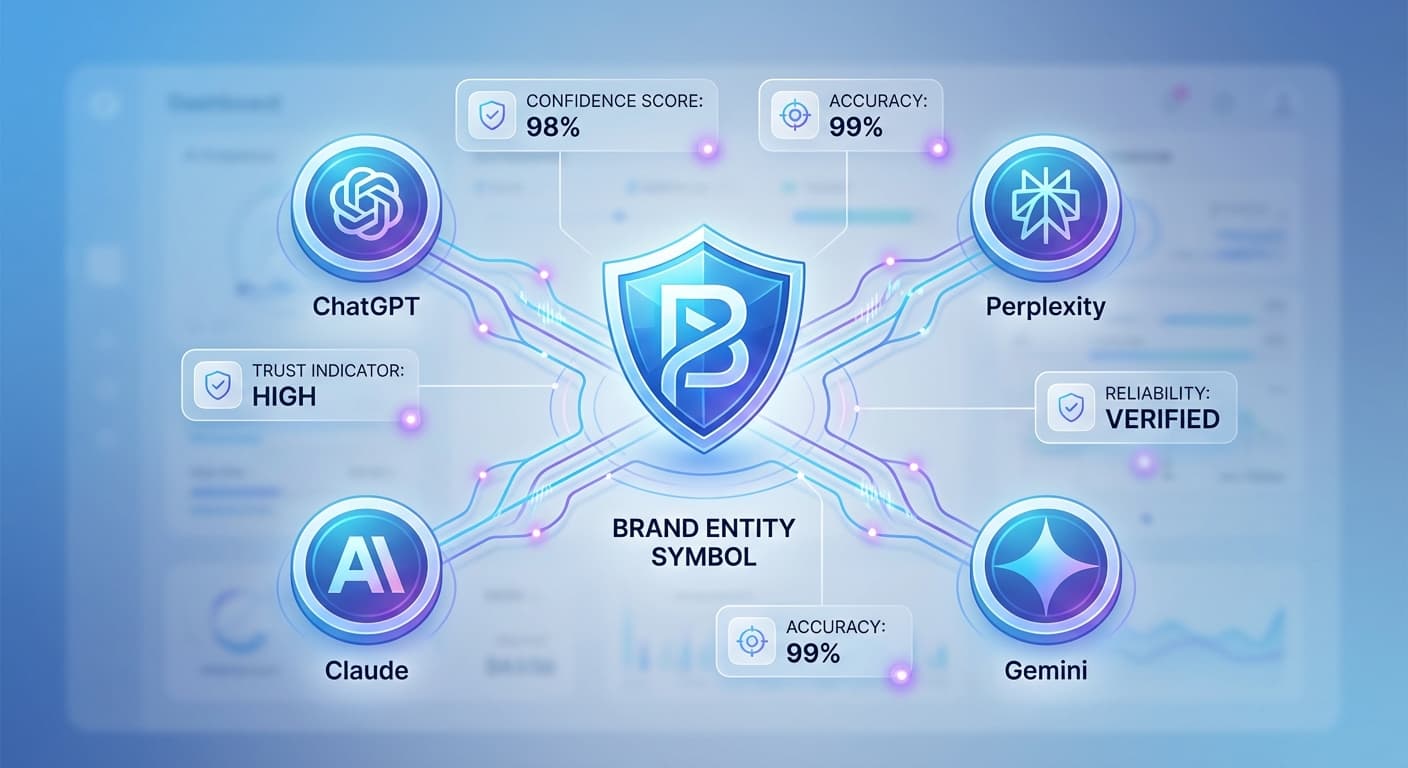

Tools like Citescope Ai's Citation Tracker can help automate this process by monitoring mentions across ChatGPT, Perplexity, Claude, and Gemini simultaneously, making it easier to spot inconsistencies or coordinated attacks.

Defense Strategies: Building AI-Resistant Brand Protection

1. Create Authoritative Content Networks

Establish Semantic Authority

Publish comprehensive, well-researched content that AI systems can confidently cite. Focus on:

Build Citation Worthiness

Structure your content to be easily referenced by AI systems:

2. Strengthen Your Digital Footprint

Diversify Content Distribution

Ensure your brand narrative appears across multiple high-authority platforms:

Optimize for AI Interpretability

Structure your content for AI understanding:

3. Proactive Reputation Management

Rapid Response Protocols

Develop systems to quickly address emerging misinformation:

Content Flooding Defense

When attacks occur, quickly publish high-quality content to dilute negative information:

4. Technical Countermeasures

Schema Markup Implementation

Use structured data to help AI systems understand your official brand information:
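For instance, a schema.org `Organization` description in JSON-LD can state your official name, site, logo, and profiles. The sketch below generates one in Python. The helper names and all example values are placeholders, and whether a given AI system actually reads this markup is not something the markup itself can guarantee.

```python
import json


def organization_jsonld(name, url, logo, same_as):
    """Build a schema.org Organization description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": list(same_as),  # official profiles on other platforms
    }
    return json.dumps(data, indent=2)


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script element used to embed it in a page."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


# Placeholder values for illustration only.
markup = organization_jsonld(
    name="Example Corp",
    url="https://www.example.com",
    logo="https://www.example.com/logo.png",
    same_as=["https://www.linkedin.com/company/example"],
)
print(script_tag(markup))
```

The resulting script element goes in the HTML of your official pages, typically in the head.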

Content Authentication

Implement digital signatures and verification methods:
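As a minimal sketch of the idea, the code below attaches a keyed hash (HMAC-SHA256) to a piece of official text so that later copies can be checked for tampering. This is a stand-in chosen because it needs only Python's standard library. It is not a public-key digital signature, so only parties holding the secret key can verify it. The function names and the whitespace normalization are assumptions.

```python
import hashlib
import hmac


def canonical(text: str) -> bytes:
    """Normalize whitespace so trivial reformatting does not change the tag."""
    return " ".join(text.split()).encode("utf-8")


def sign(text: str, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag for the text."""
    return hmac.new(key, canonical(text), hashlib.sha256).hexdigest()


def verify(text: str, key: bytes, tag: str) -> bool:
    """Check a tag using a constant-time comparison."""
    return hmac.compare_digest(sign(text, key), tag)
```

For verification by outside parties, a public-key scheme from a vetted cryptography library would be the more appropriate choice.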

How Citescope Ai Helps Defend Against LLM SEO Attacks

While manual monitoring and defense can be effective, the scale and sophistication of modern LLM SEO attacks often require automated solutions. Citescope Ai provides several key capabilities for brand protection:

Real-Time Citation Monitoring

Track when and how your brand gets mentioned across all major AI search engines, providing early warning of potential attacks or narrative shifts.

Content Optimization for Authority

The AI Rewriter tool helps ensure your content is structured and written in ways that AI systems recognize as authoritative and credible, making it harder for manipulated content to outrank your official messaging.

GEO Score Analysis

Understand how well your content performs across the five key dimensions AI systems evaluate: interpretability, semantic richness, conversational relevance, structure, and authority. Higher scores make your content more resistant to being displaced by black hat tactics.

Competitive Intelligence

Monitor how competitors and potential bad actors are being cited by AI systems, helping you identify coordinated attack patterns before they fully impact your brand.

Legal and Ethical Considerations

As you defend against black hat LLM SEO, maintain ethical standards in your own practices:

Know Your Rights

Document Everything

Maintain detailed records of:

Work with Platforms

Most AI companies have policies against manipulation:

Building Long-Term Resilience

Protecting your brand from black hat LLM SEO isn't a one-time effort. It requires ongoing vigilance and adaptation.

Continuous Improvement Cycle

Team Training and Awareness

Educate your team about:

Technology Investment

Consider investing in:

The Future of AI Search Integrity

As AI search engines mature, we can expect:

Brands that invest in legitimate optimization and defense strategies now will be better positioned as these improvements roll out.

Ready to Optimize for AI Search?

Protecting your brand from black hat LLM SEO tactics requires the right tools and strategies. Citescope Ai helps you monitor your AI search presence, optimize your content for maximum authority, and track citations across all major AI platforms.

Start defending your brand today with our free tier: get 3 content optimizations to see how your content performs against AI search algorithms, plus access to our citation tracking tools to monitor your brand's AI search presence.

Start Your Free Trial and take control of your brand's narrative in the age of AI search.